Best practices for SQL Server instances

These are a few of the Best Practices to keep up the performance better in SQL Server Instances

Configuring Windows

This section covers configuration topics about how to optimize the Microsoft Windows operating system for SQL Server performance when running on Compute Engine.

Setting up Windows firewall

Best practice: Use the Windows Server Advanced Firewall, and specify the IP addresses of your client computers.

The Windows Advanced Firewall is an important security component in Windows Server. When you set up your SQL Server environment so that it can connect to the database from other client machines, configure the firewall to allow incoming traffic:

netsh advfirewall firewall add rule name="SQL Access" ^

dir=in action=allow ^

program="%programfiles%\Microsoft SQL Server\MSSQL12.MSSQLSERVER\MSSQL\Binn\sqlservr.exe" ^

remoteip=LOCAL_SUBNET

When you use this firewall rule, it is a good practice to specify the IP address of your client machines. Specify a comma-delimited list of IP addresses without blank spaces for the remoteip parameter in place of LOCAL_SUBNET. Also, note that the path for the program parameter might change depending on the version of SQL Server that you use.

The SQL Server application image includes a SQL Server Windows firewall rule. This rule is fairly unrestricted, so consider disabling it before your system goes to production.

Tuning network connections

Best practice: Use the operating system’s default network settings.

The default network settings on most operating systems are configured for connections on small computers that are connected to moderately fast networks. Such settings are usually sufficient. Furthermore, conservative defaults make sure that network traffic doesn’t overwhelm the network and connected computers.

Installing antivirus

Best practice: Follow the Microsoft guidance for antivirus software.

If you are running Windows, you should be running some antivirus software. Malware and software viruses present a significant risk to any system connected to a network, and antivirus software is a simple mitigation step you can use to protect your data. However, if the antivirus software is not configured correctly, it can negatively impact your database performance.

Optimizing for performance and stability

This section provides information about how to optimize SQL Server performance on Compute Engine and describes operational activities to help keep it running smoothly.

Moving data files and log files to a new disk

Best practice: Use a separate SSD persistent disk for log and data files.

By default, the preconfigured image for SQL Server comes with everything installed on the boot persistent disk, which mounts as the `C:` drive. Consider attaching a secondary SSD persistent disk and moving the log files and data files to the new disk.

Using a Local SSD to improve IOPS

Best practice: Create new SQL Server instances with one or more local SSDs to store the tempdb and Windows paging files.

The ephemeral nature of local SSD technology makes it a poor candidate for use with your critical databases and important files. However the tempdb and Windows paging files are both temporary files, so both are great candidates to move to a local SSD. This offloads a significant number of I/O operations from your SSD persistent disks.

Setting up TempDB

It used to be a best practice to optimize the SQL Server CPU usage by creating one TempDB file per CPU. However, because CPU counts have grown over time, following this guideline can cause performance to decrease. As a good starting point, use 4 TempDB files. As you measure your system’s performance, in rare cases you might need to incrementally increase the number of TempDB files to a maximum of 8.

Parallel query processing

Best practice: Set the max degree of parallelism to 8.

The recommended default setting for the max degree of parallelism is to match it to the number of CPUs on the server. However, there is a point where breaking a query into 16 or 32 chunks, executing them all on different vCPUs, and then consolidating it all back to a single result takes a lot more time than if only one vCPU had run the query. In practice, 8 works as a good default value.

Best practice: Monitor for CXPACKET waits and incrementally increase the cost threshold for parallelism.

This setting goes hand in hand with the max degree of parallelism. Each unit represents a combination of CPU and I/O work required to perform a query with a serial execution plan before it is considered for a parallel execution plan. The default value is 5. Although we give no specific recommendation to change the default value, it is worth keeping an eye on and, if necessary, increasing it incrementally by 5 during load testing. One key indicator that this value might need to be increased is the presence of CXPACKET waits. Although the presence of CXPACKET waits does not necessarily indicate that this setting should change, it’s a good place to start.

Best practice: Monitor for different wait types, and adjust the global parallel processing settings or set them at the individual database level.

Individual databases can have different parallelism needs. You can set these settings globally, and set Max DOP at the individual database level. You should observe your unique workloads, monitor for waits, and then adjust the values accordingly.

Handling transaction logs

Best practice: Monitor the growth of the transaction log on your system. Consider disabling autogrowth and setting your log file to a fixed size, based on your average daily log accumulation.

One of the most overlooked sources of performance loss and intermittent slowdowns is the unmanaged growth of the transaction log. When your database is configured to use the Full recovery model, you can perform a restore to any point in time, but your transaction logs fill up faster. By default, when the transaction log file is full, SQL Server increases the size of the file to add more empty space to write more transactions and blocks all activity on the database until it finishes. SQL Server grows each log file based off of its Maximum File Size and the File Growth setting.

When the file has reached its maximum size limit and cannot grow, the system issues a 9002 error

( When the transaction log becomes full, SQL Server Database Engine issues a 9002 error. The log can fill when the database is online, or in recovery. If the log fills while the database is online, the database remains online but can only be read, not updated. If the log fills during recovery, the Database Engine marks the database as RESOURCE PENDING. In either case, user action is required to make log space available.)

and puts the database into read-only mode. If the file can grow, SQL Server expands the file size and zeroes out the empty space. The setting for File Growth defaults to 10% of the log file’s current size. This is not a good default setting for performance because the larger your file grows, the longer it takes to create the new, empty space.

Best practice: Schedule regular backups of the transaction log.

Regardless of the maximum size and growth settings, schedule regular transaction log backups, which, by default, truncates old log entries and lets the system reuse existing file space. This simple maintenance task can help to avoid performance dips at your peak traffic times.

Optimizing Virtual Log Files

Best practice: Monitor Virtual Log File growth and take action to prevent log file fragmentation.

The physical transaction log file is segmented into Virtual Log Files (VLF). New VLFs are created every time the physical transaction log file has to grow. If you did not disable auto-growth, and growth is happening too frequently, too many VLFs are created. This activity can result in log file fragmentation, which is similar to disk fragmentation and can adversely affect performance.

SQL Server 2014 introduced a more efficient algorithm for determining how many VLFs to create during auto-growth. Generally, if the growth is less than 1/8 the size of the current log file, SQL Server creates one VLF within that new segment. Previously, it would create 8 VLFs for growth between 64 MB and 1 GB, and 16 VLFs for growth over 1 GB. You can use the TSQL script below to check how many VLFs your database currently has. If it has thousands of files, consider manually shrinking and resizing your log file.

–Check VLFs and substitute your database name below

USE YOUR_DB

DECLARE @vlf_count INT

DBCC LOGINFO

SET @vlf_count = @@ROWCOUNT

SELECT VLFs = @vlf_count

Avoiding index fragmentation

Best practice: Regularly defragment the indexes on your most heavily modified tables.

The indexes in your tables can become fragmented, which can lead to poor performance of any queries using these indexes. A regular maintenance schedule should include reorganizing the indexes on your most heavily modified tables. You can run the following Transact-SQL script for your database to show the indexes and their fragmentation percentage. You can see in the example results that the PK_STOCK index is 95% fragmented. In the following ‘SELECT’ statement, replace ‘YOUR_DB’ with the name of your database:

SELECT stats.index_id as id, name, avg_fragmentation_in_percent

FROM sys.dm_db_index_physical_stats (DB_ID(N'YOUR_DB'), NULL, NULL, NULL, NULL) AS stats

JOIN sys.indexes AS indx ON stats.object_id = indx.object_id

AND stats.index_id = indx.index_id AND name IS NOT NULL;

RESULTS

-------------------------------

Id name avg_fragmentation_in_percent

-------------------------------

1 ORDERS_I1 0

2 ORDERS_I2 0

1 ORDER_LINE_I1 0.01

1 PK_STOCK95.5529819557039

1 PK_WAREHOUSE0.8

When your indexes are too fragmented, you can reorganize them by using a basic ALTER script. Here is an example script that prints the ALTER statements you can run for each of your tables’ indexes:

SELECT

'ALTER INDEX ALL ON ' + table_name + ' REORGANIZE;

GO'

FROM INFORMATION_SCHEMA.TABLES WHERE TABLE_TYPE = 'BASE TABLE' AND TABLE_CATALOG = 'YOUR_DB'

Choose the tables from the result set that have the highest fragmentation, and then execute those statements incrementally. Consider scheduling this or a similar script as one of your regular maintenance jobs.

If you are restoring a database from another location to Google Cloud, you should rebuild, or at least reorganize, the indexes, then update usage. Replace YOUR_DB with the name of your database:

dbcc updateusage (YOUR_DB) GO USE YOUR_DB

GO

Formatting secondary disks

Best practice: Format secondary disks with a 64 KB allocation unit.

SQL Server stores data in units of storage called extents. Extents are 64 KB in size and are made up of eight, contiguous memory pages that are also 8 KB in size. Formatting a disk with a 64 KB allocation unit lets SQL Server read and write extents more efficiently, which increases I/O performance from the disk.

To format secondary disks with a 64 KB allocation unit, run the following PowerShell command, which searches for all new and uninitialized disks in a system and formats the disks with the 64 KB allocation unit:

Get-Disk | Where-Object {$_.PartitionStyle -eq 'RAW'} | Initialize-Disk -PartitionStyle GPT -PassThru | New-Partition -AssignDriveLetter -UseMaximumSize | Format-Volume -FileSystem NTFS -AllocationUnitSize 65536 -Confirm:$FALSE

Backing up

Best practice: Have a plan for backups and perform backups regularly.

Ola Hallengren’s site provides a good starting point for understanding how to implement a solid backup and maintenance plan.

When taking regular database backups, be careful not to consume too many persistent disk IOPS. Use the local SSD to stage your backups and then push them to a Cloud Storage bucket.

Bulk-loading data

Best practice: Use a separate database to stage and transform bulk data before moving it to production servers.

It’s likely that you will need to load large amounts of data into your system at least once, if not regularly. This is a resource-intensive operation, and you might reach the persistent disk IOPS limit when you do bulk loads.

There is an easy way to cut down on the disk I/O and CPU consumption of bulk load operations, with the added benefit of speeding up the execution time of your batch jobs. The solution is to create a completely separate database that uses the Simple recovery model, and then use that database for staging and transforming the bulk dataset before you insert it into your production database. You can also put this new database on a local SSD drive if you have enough space. Using a local SSD for the recovery database reduces the resource consumption of your bulk operations and the time required to complete the jobs. The final benefit is that your backup job for the production data won’t have to back up all those bulk operations in the transaction log, and therefore it will be smaller and run faster.

Note: Bulk Logged is another recovery model that you might consider for your bulk loading operations. It uses more disk space, more disk I/O, and requires you to regularly run backup jobs on your transaction logs, but it is not as thorough as the Full recovery model.

Validating your setup

Best practice: Test your configuration to validate that it performs as expected.

Whenever you set up a new system, you should plan on validating the configuration and running some performance tests. This stored procedure is a great resource for evaluating your SQL Server configuration. Take some time later to read about the configuration flags, and run the procedure.

Using compressed tables

Best practice: Enable table and index compression.

It might seem counterintuitive that compressing tables could make your system perform faster, but, in most cases, that’s what happens. The tradeoff is using a small number of CPU cycles to compress the data and eliminate the extra disk I/O required to read and write the bigger blocks. Generally, the less disk I/O your system uses, the better its performance.

Note: The table and index compression options are also available for SQL Server Standard Edition 2016 SP1 and later.

Enabling the buffer pool extension

Best practice: Use the buffer pool extension to speed data access.

The buffer pool is where the system stores clean pages. In simple terms, it stores copies of your data, mirroring what it looks like on a disk. When the data changes in memory, it’s called a dirty page. Dirty pages must be flushed to the disk to save the changes. When your database is larger than your available memory, that puts pressure on the buffer pool, and clean pages might be dropped. When the clean pages are dropped, the system must read from the disk the next time it accesses the dropped data.

The buffer pool extension feature lets you push clean pages to a local SSD, instead of dropping them. This works along the same lines as virtual memory, which is to say, by swapping and giving you access to the clean pages on the local SSD, which is faster than going to the regular disk to fetch the data.

This technique is not nearly as fast as having enough memory, but it can give you a modest increase in throughput when your available memory is low.

SQL Server Database Consulting Services

Ready to future-proof your SQL Server investment?

Share this

Share this

More resources

Learn more about Pythian by reading the following blogs and articles.

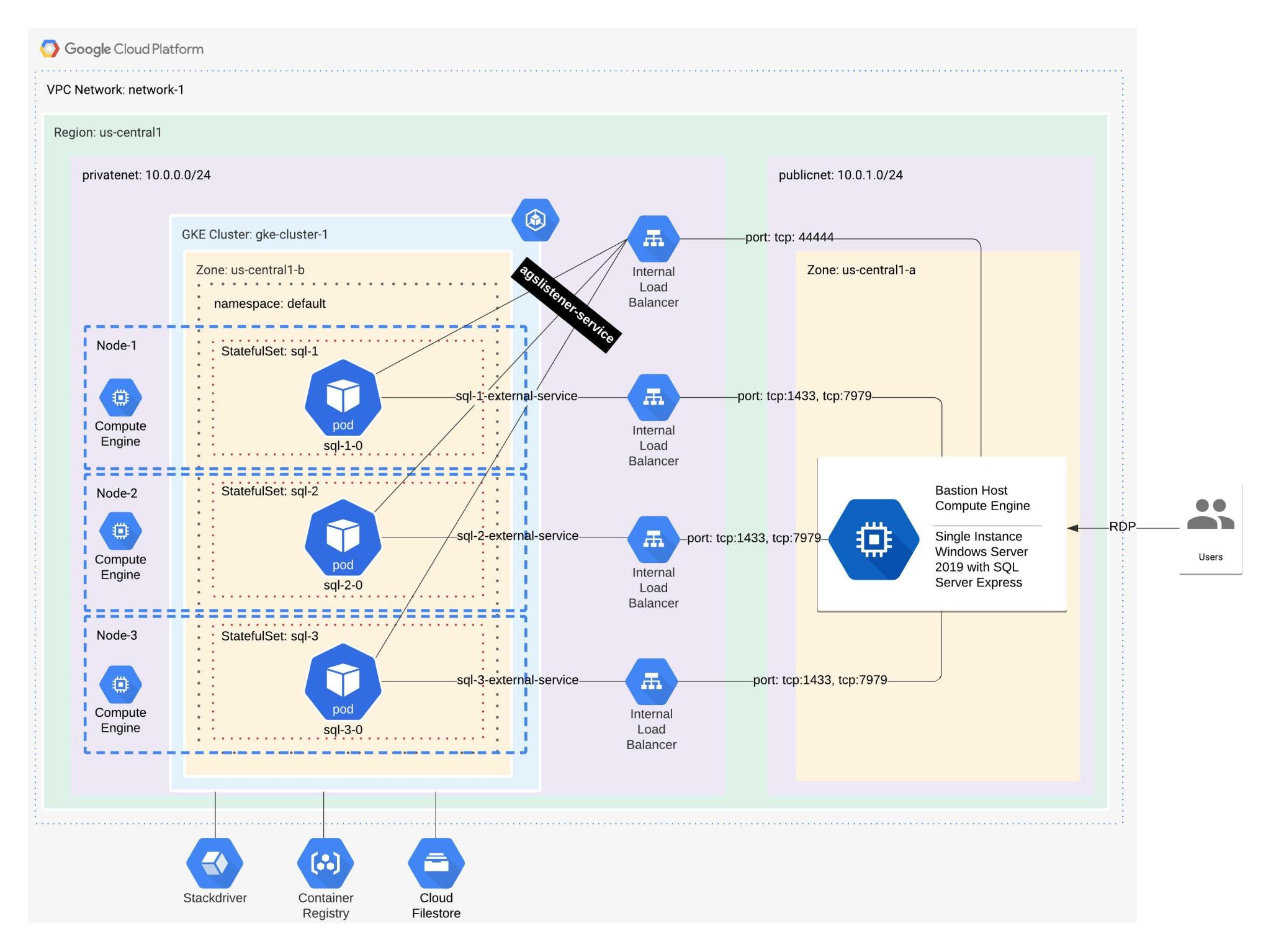

SQL Server AlwaysOn Availability Groups on Google Kubernetes Engine

SQL Server on Linux – Configuration (Ubuntu)

How To Export Collections in SCCM to CSV Using Powershell

Ready to unlock value from your data?

With Pythian, you can accomplish your data transformation goals and more.