GoldenGate 12.2 big data adapters: part 4 - HBASE

This is the next post in my series about Oracle GoldenGate Big Data adapters.

Here is a list of all posts in the series:

- GoldenGate 12.2 Big Data Adapters: part 1 - HDFS

- GoldenGate 12.2 Big Data Adapters: part 2 - Flume

- GoldenGate 12.2 Big Data Adapters: part 3 - Kafka

- GoldenGate 12.2 Big Data Adapters: part 4 - HBASE

In this post, I am going to explore the HBase adapter for GoldenGate. Let's start by recalling what we know about HBase. Apache HBase is a non-relational, distributed database modeled after Google's Bigtable. It provides read/write access to data and runs on top of Hadoop and HDFS.

What does this tell us? First, we can write and change the data. Second, we need to remember that it is a non-relational database, which is a different approach compared to traditional relational databases. You can think of HBase as a key-value store. We are not going deep into HBase architecture here, as our main task is to test the Oracle GoldenGate adapter.

Target Environment Setup and Version Compatibility

Our configuration has an Oracle database as a source with a GoldenGate extract and a target system with Oracle GoldenGate for Big Data. The source-side replication is already configured. We have an initial trail file for data initialization and trails for ongoing replication, capturing changes for all tables in the ggtest schema.

Now we need to prepare our target site. I used pseudo-distributed mode for my tests, which runs a fully distributed setup on a single host. While not acceptable for production, it suffices for our tests. On the same box, I have HDFS serving as the main storage.

The Oracle documentation states that HBase is supported from version 1.0.x. In my first attempt, I tried HBase version 1.0.0 (Cloudera 5.6), but it failed:

    2016-03-29 11:51:31 ERROR OGG-15051 Oracle GoldenGate Delivery, irhbase.prm: Java or JNI exception: java.lang.NoSuchMethodError: org.apache.hadoop.hbase.HTableDescriptor.addFamily(Lorg/apache/hadoop/hbase/HColumnDescriptor;)Lorg/apache/hadoop/hbase/HTableDescriptor;.
    2016-03-29 11:51:31 ERROR OGG-01668 Oracle GoldenGate Delivery, irhbase.prm: PROCESS ABENDING.

I installed HBase version 1.1.4, and it worked perfectly. Here is the pseudo-distributed configuration:

    <configuration>
      <property>
        <name>hbase.cluster.distributed</name>
        <value>true</value>
      </property>
      <property>
        <name>hbase.rootdir</name>
        <value>hdfs://localhost:8020/user/oracle/hbase</value>
      </property>
    </configuration>

Configuring HBase Properties and Initial Load Replicat

With HBase running, we switch to GoldenGate. We need to copy hbase.props from $OGG_HOME/AdapterExamples/big-data/hbase to $OGG_HOME/dirprm. I modified the gg.classpath parameter to point to my HBase configuration files and libraries.

Sample hbase.props configuration

    gg.handlerlist=hbase
    gg.handler.hbase.type=hbase
    gg.handler.hbase.hBaseColumnFamilyName=cf
    gg.handler.hbase.keyValueDelimiter=CDATA[=]
    gg.handler.hbase.keyValuePairDelimiter=CDATA[,]
    gg.handler.hbase.encoding=UTF-8
    gg.handler.hbase.pkUpdateHandling=abend
    gg.handler.hbase.nullValueRepresentation=CDATA[NULL]
    gg.handler.hbase.authType=none
    gg.handler.hbase.includeTokens=false
    gg.handler.hbase.mode=tx
    goldengate.userexit.timestamp=utc
    goldengate.userexit.writers=javawriter
    javawriter.stats.display=TRUE
    javawriter.stats.full=TRUE
    gg.log=log4j
    gg.log.level=INFO
    gg.report.time=30sec
    gg.classpath=/u01/hbase/lib/*:/u01/hbase/conf:/usr/lib/hadoop/client/*
    javawriter.bootoptions=-Xmx512m -Xms32m -Djava.class.path=ggjava/ggjava.jar

Next, I prepared a parameter file for the initial load (irhbase.prm) using the SPECIALRUN and EXTFILE parameters to map GGTEST.* to BDTEST.*.
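Since a wrong or incomplete gg.classpath is an easy way to end up with Java exceptions at startup, it can help to check the entries before running the replicat. The following is only a minimal sketch in Python, not part of GoldenGate: the helper names `parse_props` and `missing_classpath_entries` are made up for illustration, and the parser assumes plain `key=value` lines like the ones in the sample properties file.

```python
import glob
import os


def parse_props(text):
    """Parse simple key=value lines, skipping blank lines and # comments."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        # Split on the first "=" only, so values such as CDATA[=] stay intact
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def missing_classpath_entries(props):
    """Return the gg.classpath entries that match nothing on this host."""
    missing = []
    for entry in props.get("gg.classpath", "").split(":"):
        if not entry:
            continue
        if entry.endswith("/*"):
            # A trailing /* is the Java wildcard for the jar files in a directory
            found = bool(glob.glob(os.path.join(entry[:-2], "*.jar")))
        else:
            found = os.path.exists(entry)
        if not found:
            missing.append(entry)
    return missing


# Hypothetical usage with a fragment of the properties shown in this post
sample = """
gg.handlerlist=hbase
gg.handler.hbase.keyValueDelimiter=CDATA[=]
gg.classpath=/u01/hbase/lib/*:/u01/hbase/conf:/usr/lib/hadoop/client/*
"""
props = parse_props(sample)
print(props["gg.handler.hbase.keyValueDelimiter"])
print(missing_classpath_entries(props))
```

In practice you would read the text from dirprm/hbase.props instead of the inline sample. An empty list from the second call only means every entry resolves to something on disk; it does not prove the library versions are compatible.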

Executing the Initial Load and Verifying HBase Table Structure

We ran the replicat in passive mode from the command line.

Before running, HBase was empty. After the process:

    hbase(main):002:0> list
    TABLE
    BDTEST:TEST_TAB_1
    BDTEST:TEST_TAB_2
    2 row(s) in 0.3680 seconds

Scanning the tables shows that the structure and columns (mapped into the cf column family) are as expected:

    hbase(main):005:0> scan 'BDTEST:TEST_TAB_1'
    ROW    COLUMN+CELL
    1      column=cf:ACC_DATE, timestamp=1459269153102, value=2014-01-22:12:14:30
    1      column=cf:PK_ID, timestamp=1459269153102, value=1
    ...

Setting Up Continuous Data Replication

I created rhbase.prm for ongoing replication. By default, a replicat looks for a trail starting at sequence 0, but since I have a purging policy, I needed to specify the exact sequence to start from.

    GGSCI (sandbox.localdomain) 2> add replicat rhbase, exttrail dirdat/or,EXTSEQNO 45
    REPLICAT added.
    GGSCI (sandbox.localdomain) 3> start replicat rhbase

I inserted rows on the Oracle side, and all were successfully replicated to HBase, maintaining a minimal replication lag.
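If older trail files have been purged, the value for EXTSEQNO has to come from the files that are still on disk. Here is a small Python sketch of one way to find it, assuming trail files are named with the two-character prefix (here `or`) followed only by the sequence digits. The function name `lowest_trail_seqno` and the file list are hypothetical.

```python
import re


def lowest_trail_seqno(file_names, prefix):
    """Return the smallest sequence number among trail files with this prefix."""
    # Match names made of the prefix followed by digits only, e.g. or000045
    pattern = re.compile(re.escape(prefix) + r"(\d+)$")
    seqnos = []
    for name in file_names:
        match = pattern.match(name)
        if match:
            seqnos.append(int(match.group(1)))
    return min(seqnos) if seqnos else None


# Hypothetical directory listing; in practice this could come from os.listdir("dirdat")
names = ["initld", "or000045", "or000046", "or000047"]
print(lowest_trail_seqno(names, "or"))  # prints 45
```

The function returns None when no file matches the prefix, so it is worth checking the result before using it in an `add replicat` command.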

Managing Row Identifiers and Constraints in HBase

Interestingly, the row identifier for test_tab_1 is the pk_id value, while for test_tab_2, it is a concatenation of all column values. This happens because test_tab_2 lacked a primary key.

When I added a primary key constraint to test_tab_2 on the source, the row ID in HBase changed on the fly:

    alter table ggtest.test_tab_2 add constraint pk_test_tab_2 primary key (pk_id);

HBase dynamically adjusted the row ID column for newly inserted records. This also works with unique indexes. If we need a specific key for a table in HBase, we must have at least a unique constraint on the source.
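To make the row ID behavior concrete, here is a toy Python model of it. This is not the adapter's code: the `row_key` function is made up for illustration, and the `|` separator is an assumption, since the exact way the adapter joins the column values is not shown in this post.

```python
def row_key(row, key_columns=None, separator="|"):
    """Build a row key from the key columns, or from all columns if there are none."""
    # With no primary key or unique index, fall back to every column in order
    columns = key_columns if key_columns else list(row.keys())
    return separator.join(str(row[column]) for column in columns)


row = {"PK_ID": 1, "ACC_DATE": "2014-01-22:12:14:30"}

# Table with a primary key on PK_ID: the key is just that value
print(row_key(row, key_columns=["PK_ID"]))  # prints 1

# Table without any key: the key is built from all column values
print(row_key(row))  # prints 1|2014-01-22:12:14:30
```

The second form also shows why a keyless table is awkward as a target: any change to a non-key column produces a different row ID, so an update lands in a new row instead of replacing the old one.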

Handling DDL Changes and Data Synchronization Challenges

If we change a table structure (e.g., dropping a column), it affects newly inserted rows but will not change existing rows, even if updated. This is because HBase preserves the columns for older versions of the row.
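The effect of a dropped column can be pictured with a small Python model of a column-oriented put. It is only a sketch with a dictionary standing in for an HBase table; the `put` helper and the `OLD_COL` column are hypothetical.

```python
def put(table, key, cells):
    """Write the given cells for a row; cells that are not mentioned stay untouched."""
    table.setdefault(key, {}).update(cells)


table = {}

# Row 1 is inserted while the source table still has the column OLD_COL
put(table, "1", {"cf:PK_ID": "1", "cf:ACC_DATE": "2014-01-22:12:14:30", "cf:OLD_COL": "x"})

# OLD_COL is dropped on the source; a later update of row 1 carries only the remaining columns
put(table, "1", {"cf:PK_ID": "1", "cf:ACC_DATE": "2016-03-29:11:51:31"})

# Row 2 is inserted after the column was dropped
put(table, "2", {"cf:PK_ID": "2", "cf:ACC_DATE": "2016-03-29:12:00:00"})

print(sorted(table["1"]))  # ['cf:ACC_DATE', 'cf:OLD_COL', 'cf:PK_ID']
print(sorted(table["2"]))  # ['cf:ACC_DATE', 'cf:PK_ID']
```

Row 1 keeps the old cell because nothing ever deletes it, while row 2 never had it. Removing the stale cell would take an explicit delete on the HBase side.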

Note on Truncate: A TRUNCATE on the source table is not replicated to the target. You must truncate the table in HBase manually. If you insert data after a source truncate, HBase will perform what looks like a "merge" operation rather than a fresh start.
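The "merge" effect after a source truncate can be sketched the same way. The Python below is a simplified model, assuming the target only ever receives the new inserts; the `VAL` column, the row values, and the `apply_inserts` helper are invented for the example.

```python
def apply_inserts(target, rows):
    """Apply replicated inserts to the target; existing rows are never removed."""
    for key, cells in rows.items():
        target.setdefault(key, {}).update(cells)


# State of the HBase table before the source table is truncated
target = {
    "1": {"cf:PK_ID": "1", "cf:VAL": "old"},
    "2": {"cf:PK_ID": "2", "cf:VAL": "old"},
    "3": {"cf:PK_ID": "3", "cf:VAL": "old"},
}

# The truncate itself is not replicated; only the rows inserted afterwards arrive
source_after_truncate = {
    "1": {"cf:PK_ID": "1", "cf:VAL": "new"},
    "4": {"cf:PK_ID": "4", "cf:VAL": "new"},
}
apply_inserts(target, source_after_truncate)

# Rows that exist only on the target are leftovers from before the truncate
stale = sorted(set(target) - set(source_after_truncate))
print(sorted(target))  # ['1', '2', '3', '4']
print(stale)           # ['2', '3']
```

Row 1 is overwritten with the new values, row 4 is added, and rows 2 and 3 remain as stale data until the HBase table is truncated manually.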

Performance Observations and Final Summary

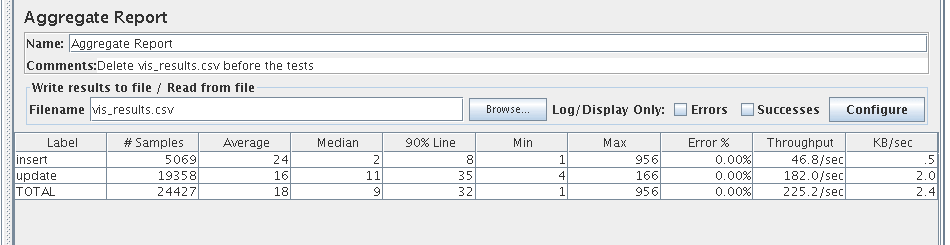

I tested performance and found that my Oracle source was the main bottleneck rather than GoldenGate or HBase. I sustained transaction rates up to 60 DML per second before the Oracle DB struggled with commit waits. HBase and the replicat handled this workload, including a test transaction of 2 billion rows, without issue.

I noticed a minor typo in the Oracle documentation where keyValuePairDelimiter was replaced by keyValueDelimiter in one section, but the functionality remains robust.

Overall, the Oracle GoldenGate for Big Data adapters for HBase are mature and ready for production workloads. I am looking forward to using this in real-world environments with significant workloads. Stay tuned for future posts on different DDL operations and data types.
