Part Three: Deploying High Availability Applications in Oracle Cloud Infrastructure – Instance Clone and Linux Storage Appliance

This is the third post in a series covering how to deploy a highly available setup of Oracle Enterprise Manager (OEM) 13.5 using Oracle Cloud Infrastructure (OCI) resources.

Recap

This series demonstrates how to deploy a highly available installation of OEM 13.5 using OCI services.

This setup will comprise the following resources:

- A two-node RAC VM database—covered in the first post

- A data guard standby DB

- Two application hosts—partially covered in the second post

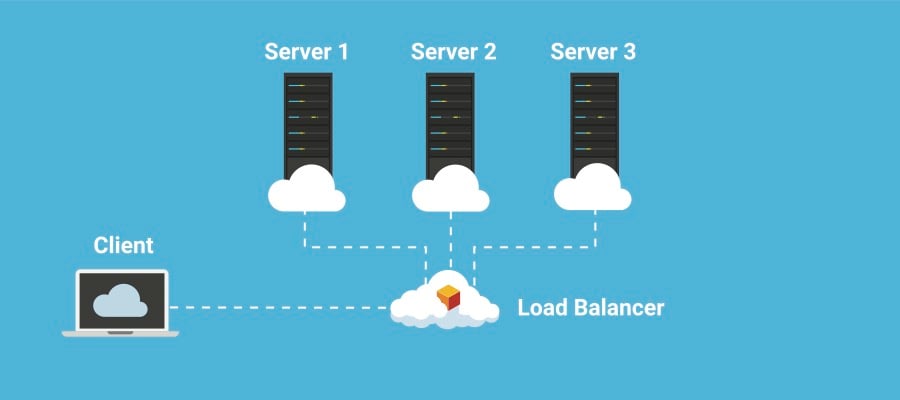

- A load balancer

- Shared storage area

The second post in this series demonstrated the setup of the first application machine, which we’ll now clone to create a second instance.

This post shows how to launch the second virtual machine, either from the console or with OCI’s Cloud Shell, and how to deploy a Linux storage appliance to host the shared storage area. Because Level 3 of OEM high availability requires a standby database, it also points to references for setting one up.

Create a custom instance image

Now that you’ve set up your first virtual machine (VM) to be used as an application instance for Oracle Enterprise Manager, it’s time to create a custom image based on that machine.

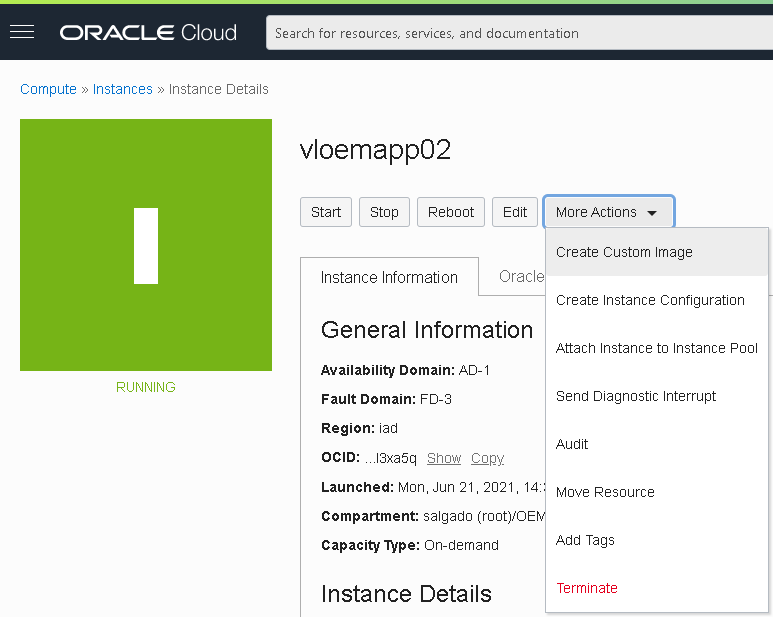

- In the “Compute Instances” section, select the first VM we created. Open its details page and select “Create Custom Image” from the “More actions” menu:

Note: The instance will become unavailable while the image is being created.

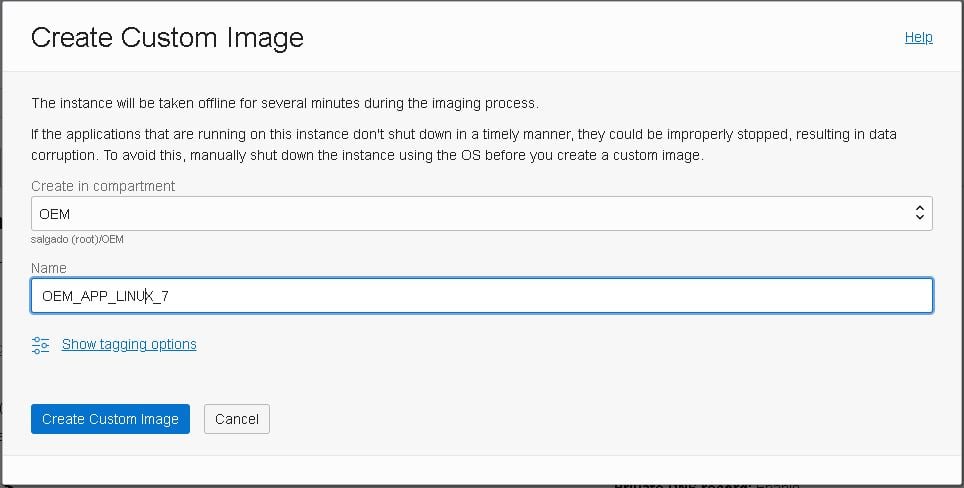

- Next, provide a name for your custom image and click “Create Custom Image”.
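If you prefer the command line, the same image can be created from Cloud Shell with `oci compute image create`. The sketch below wraps that command in a small function; the OCIDs and the image name are placeholders you supply, and the `DRY_RUN` switch is a convenience added here (it is not part of the OCI CLI) so you can review the command before it runs.

```shell
#!/usr/bin/env bash
# Sketch: create a custom image from the first application VM with the OCI CLI.
# The compartment/instance OCIDs and the image name are placeholders (assumptions).

# run: print the command (space-joined, unquoted) when DRY_RUN=1, otherwise execute it.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# create_custom_image COMPARTMENT_OCID INSTANCE_OCID IMAGE_NAME
create_custom_image() {
  local compartment_id="$1" instance_id="$2" image_name="$3"
  run oci compute image create \
    --compartment-id "$compartment_id" \
    --instance-id "$instance_id" \
    --display-name "$image_name"
}
```

For example, `DRY_RUN=1 create_custom_image "$COMPARTMENT_ID" "$APP01_ID" vloemapp-image` (hypothetical variable and image names) only prints the command; drop `DRY_RUN=1` to create the image. As with the console, the instance is unavailable while the image is being created.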

Now that we have an OS image based on the first application machine, let’s launch our second instance using this image.

Create the second instance

We’ll first do this using the console:

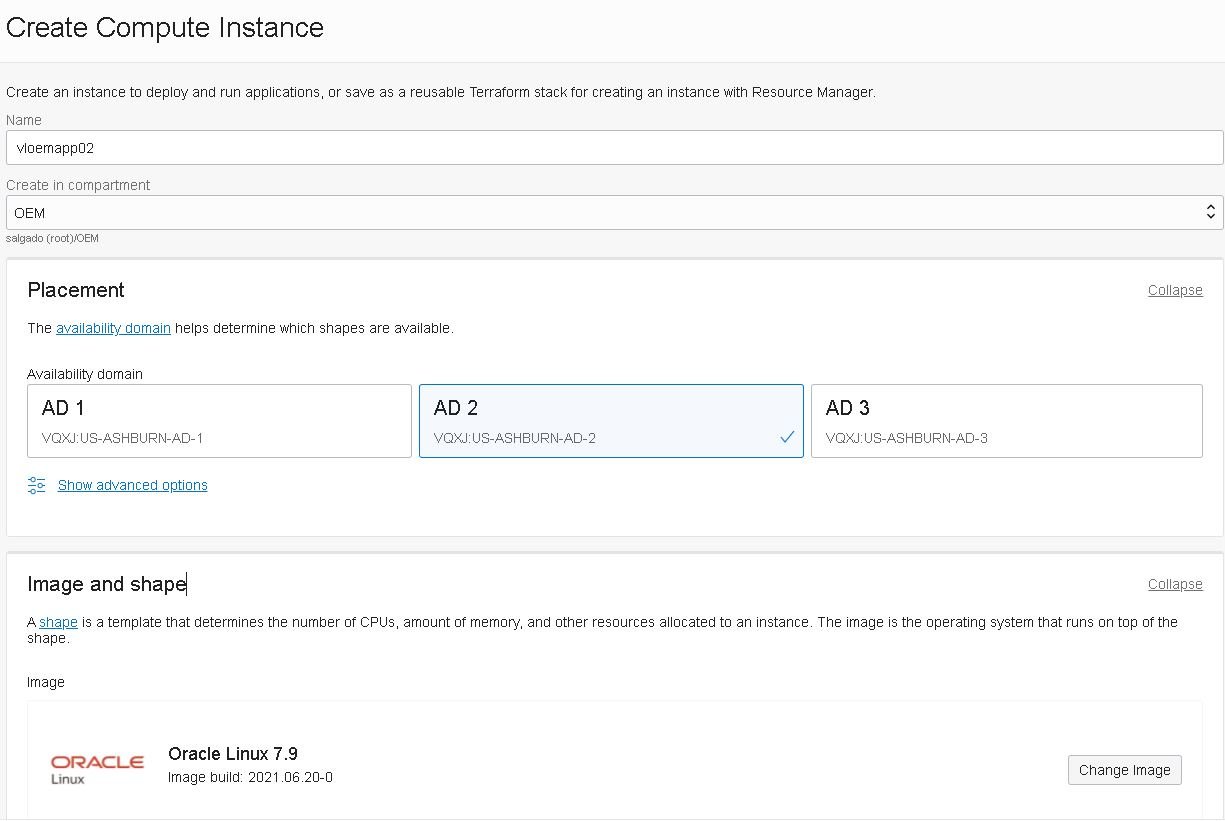

- In the “Compute Instances” section, select “Create Instance.”

- Enter the instance name “vloemapp02”, select the compartment and an availability domain different from the one used for the first instance, then click the “Change Image” button:

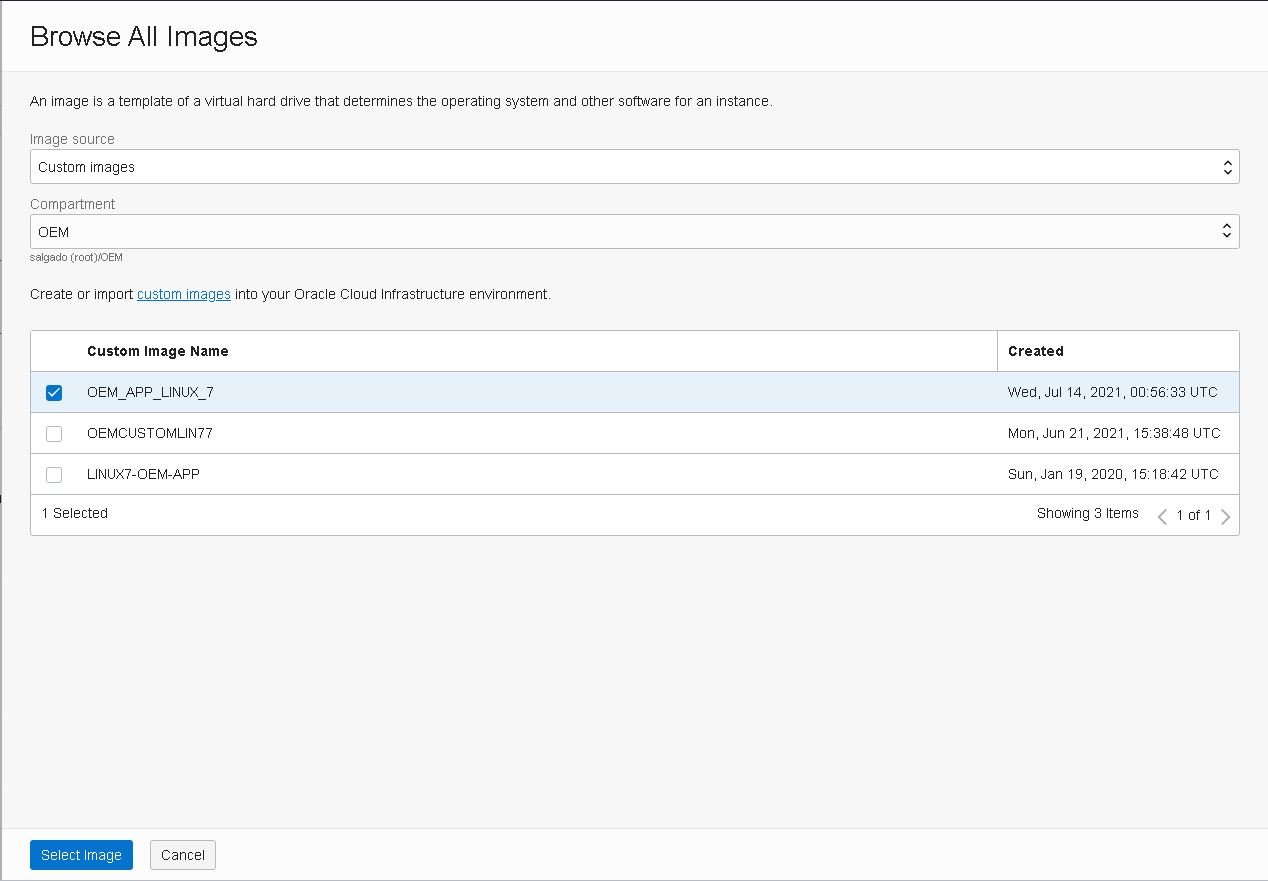

- Select the image that we just created and click “Select image”:

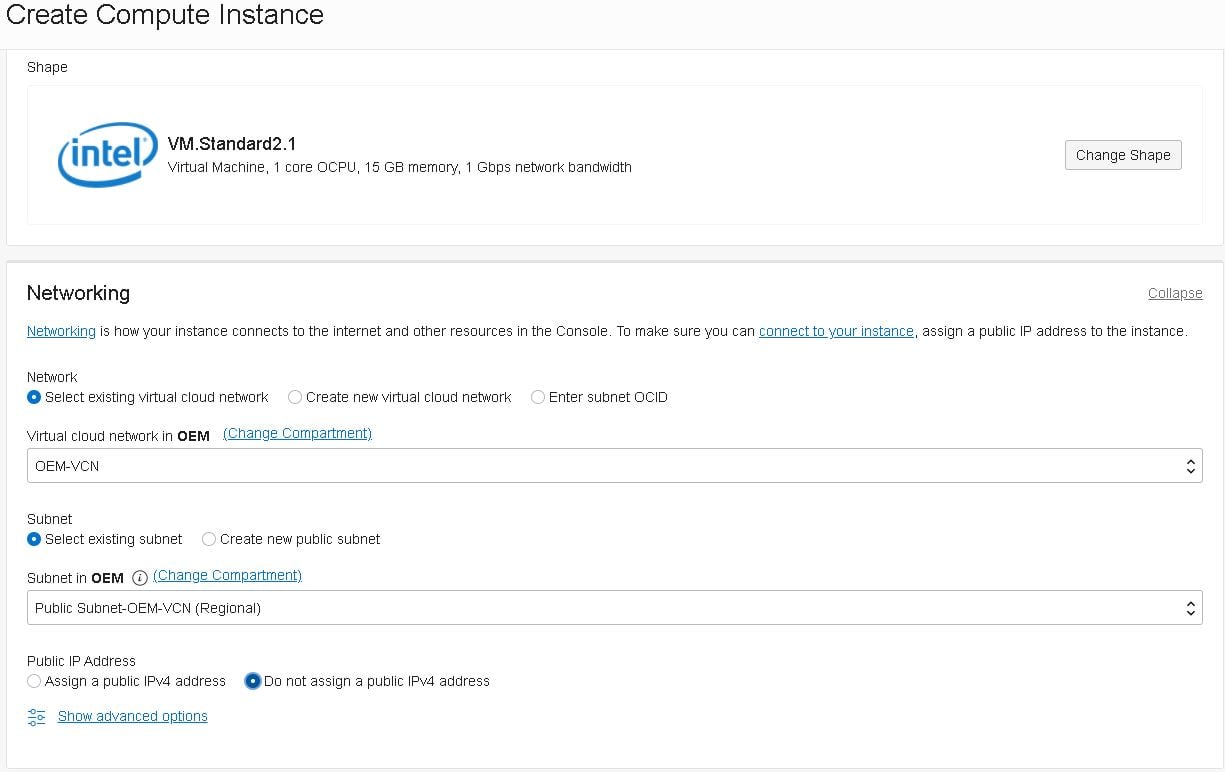

- Select the public subnet for this instance but do not assign a public IP at this time:

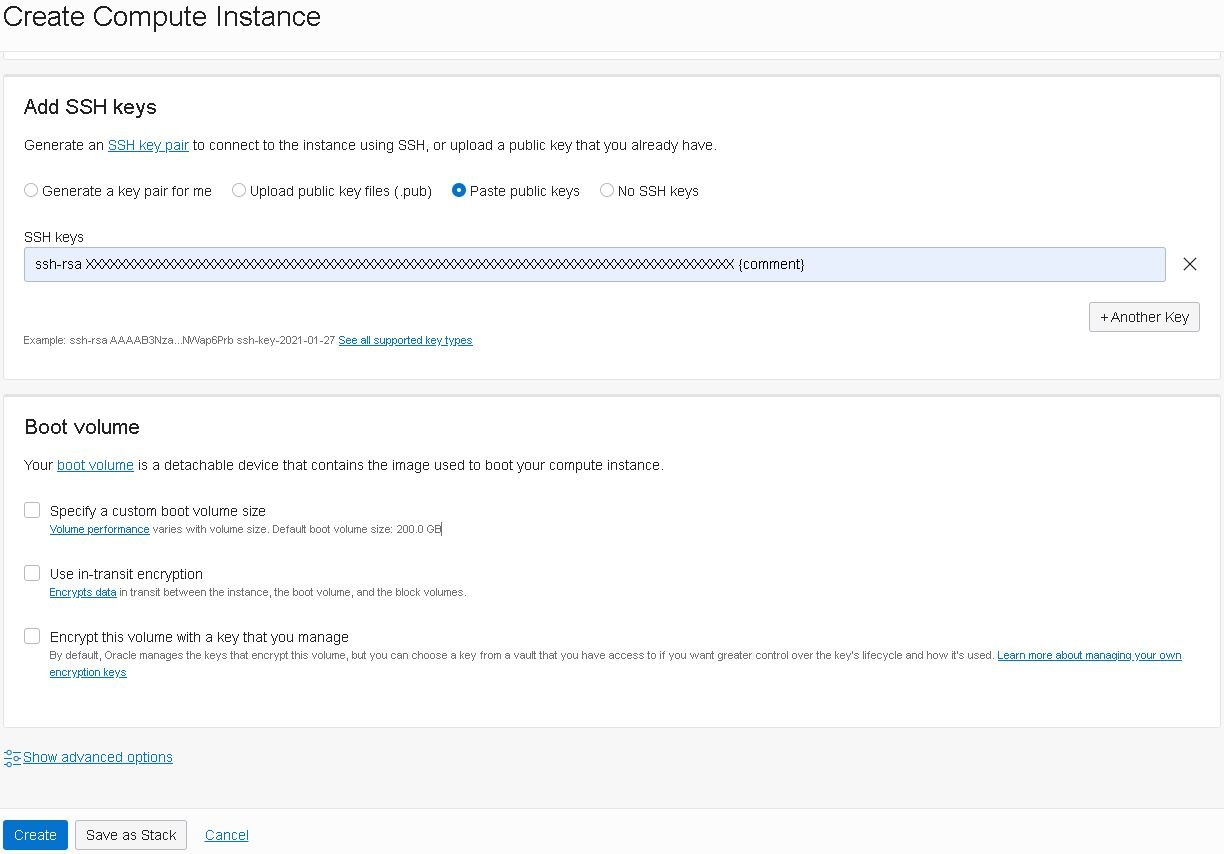

- Paste your single-line ssh-rsa public key, as shown below, or upload or generate a key pair, then click “Create”:

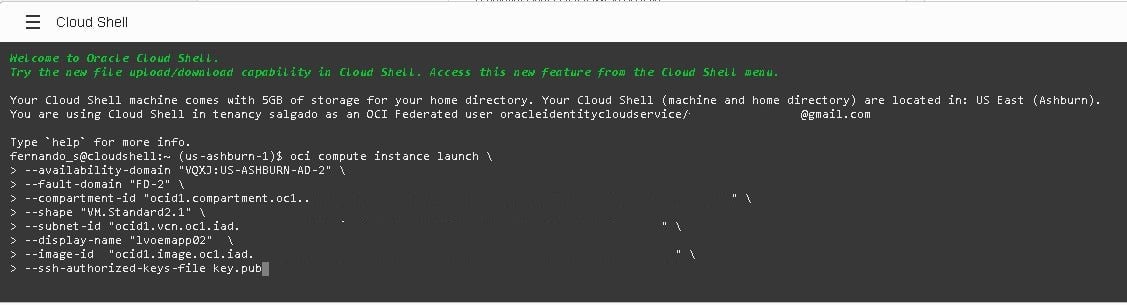

If you prefer, you can also create the instance from OCI’s Cloud Shell:

- Click the fifth icon from the right (the one with the “>_” symbol) in the top-right corner of the console.

Cloud Shell opens at the bottom of the page. From there, run the “oci compute instance launch” command as shown below (the OCIDs are masked):

```shell
oci compute instance launch \
  --availability-domain "VQXJ:US-ASHBURN-AD-2" \
  --fault-domain "FAULT-DOMAIN-2" \
  --compartment-id "ocid1.compartment.oc1..**************************************************" \
  --shape "VM.Standard2.1" \
  --subnet-id "ocid1.subnet.oc1.iad.**************************************************" \
  --assign-public-ip false \
  --display-name "vloemapp02" \
  --image-id "ocid1.image.oc1.iad.**************************************************" \
  --ssh-authorized-keys-file key.pub
```
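The availability domain passed to the launch command is the full AD name, which carries a tenancy-specific prefix (“VQXJ:” here). As a sketch, assuming `jq` is available (Cloud Shell ships with it) and that `APP01_ID` and `COMPARTMENT_ID` are hypothetical variables holding the first instance’s and the compartment’s OCIDs, you could look up the AD of vloemapp01 and pick a different one:

```shell
#!/usr/bin/env bash
# Sketch: choose an availability domain (AD) other than the one hosting vloemapp01.

# pick_other_ad CURRENT_AD AD...
# Prints the first AD in the list that differs from CURRENT_AD.
# Returns 1 when no other AD exists (for example, in a single-AD region).
pick_other_ad() {
  local current="$1" ad
  shift
  for ad in "$@"; do
    if [[ "$ad" != "$current" ]]; then
      printf '%s\n' "$ad"
      return 0
    fi
  done
  return 1
}

# Example in Cloud Shell (APP01_ID and COMPARTMENT_ID are hypothetical variables):
#   current=$(oci compute instance get --instance-id "$APP01_ID" \
#               --query 'data."availability-domain"' --raw-output)
#   mapfile -t ads < <(oci iam availability-domain list \
#               --compartment-id "$COMPARTMENT_ID" | jq -r '.data[].name')
#   pick_other_ad "$current" "${ads[@]}"
```

In regions with a single availability domain there is no alternative, so the function fails; there, spread the two instances across fault domains instead, which is what the “--fault-domain” option controls.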

Now that the new instance is created, you’ll have to assign a public IP address the same way we did in the second post. Note: For a live application, you should not assign a public IP to the application machines; use a bastion host instead. You should also attach a block volume to this instance and create a 32GB swap file, the same way we did in the second post.
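For reference, a swap file is commonly created with the sequence below. This is a sketch, not necessarily the exact commands from the second post: the path /swapfile is an assumption, the size is given in MiB (32768 MiB = 32 GiB), and `DRY_RUN=1` only prints the commands. It writes the file with `dd` rather than `fallocate` because swapon(8) notes that preallocated files may be treated as files with holes on some file systems.

```shell
#!/usr/bin/env bash
# Sketch: create and enable a swap file. The path and size are assumptions.

# run: print the command (space-joined, unquoted) when DRY_RUN=1, otherwise execute it.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# create_swapfile PATH SIZE_MIB  (run as root)
create_swapfile() {
  local path="$1" size_mib="$2"
  run dd if=/dev/zero of="$path" bs=1M count="$size_mib"  # write a fully allocated file
  run chmod 600 "$path"                                    # swap must not be readable by other users
  run mkswap "$path"                                       # write the swap signature
  run swapon "$path"                                       # enable it immediately
}

# Example: create_swapfile /swapfile 32768
# To keep it across reboots, add this line to /etc/fstab:
#   /swapfile none swap defaults 0 0
```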

Shared storage server

To run two Oracle Management Service (OMS) instances in active-active mode, we need a shared storage area, so we’ll launch a small VM whose block volume is shared with both application servers over NFS.

- Oracle offers some out-of-the-box solutions through its Marketplace, which you can access through the top-left menu:

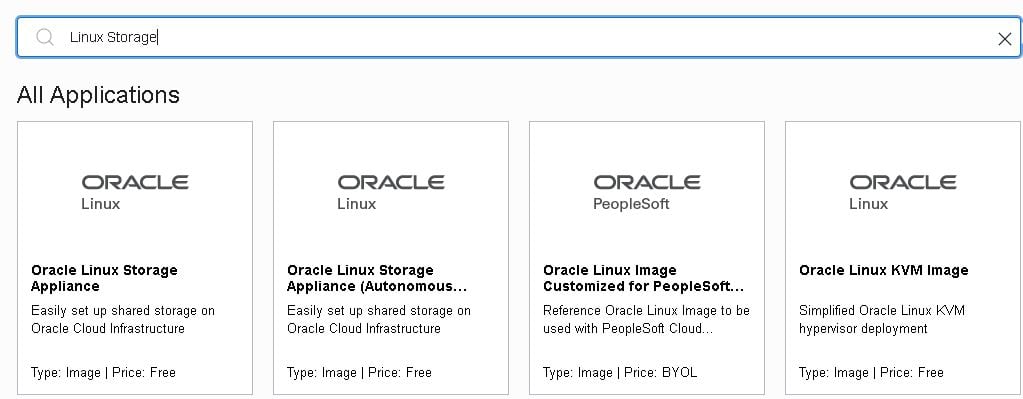

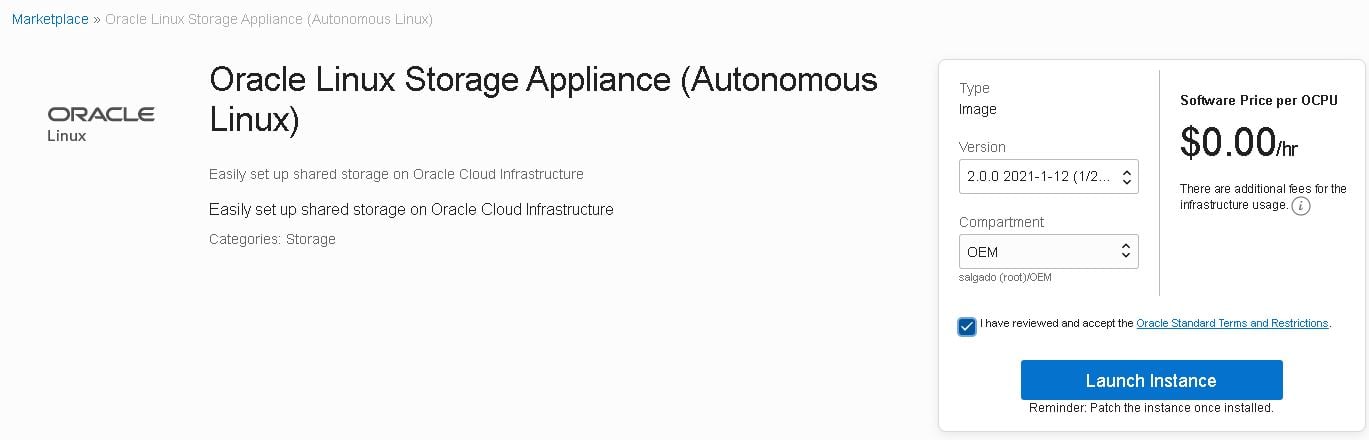

- We’ll launch a new Linux storage appliance named “vloemsto01”: search for “Linux Storage Appliance (Autonomous Linux)” and select that appliance.

- Then accept the terms and launch the instance.

Your new storage appliance will show up as a regular compute instance, so now we’ll need to attach a block volume to it. You can follow the same steps we used in the second post.
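If you’d rather script it, the block volume can also be created and attached from Cloud Shell. In this sketch the size, display name, and OCIDs are placeholders, the paravirtualized attachment type is our choice here (it needs no iSCSI commands on the guest), and `DRY_RUN=1` prints the commands instead of running them. The volume must be created in the same availability domain as vloemsto01.

```shell
#!/usr/bin/env bash
# Sketch: create a block volume and attach it to vloemsto01 with the OCI CLI.
# All OCIDs, the AD name, the size, and the display name are placeholders.

# run: print the command (space-joined, unquoted) when DRY_RUN=1, otherwise execute it.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# create_volume COMPARTMENT_OCID AD SIZE_GB NAME
# Waits until the volume is AVAILABLE, then prints its OCID.
create_volume() {
  run oci bv volume create \
    --compartment-id "$1" \
    --availability-domain "$2" \
    --size-in-gbs "$3" \
    --display-name "$4" \
    --wait-for-state AVAILABLE \
    --query 'data.id' --raw-output
}

# attach_volume INSTANCE_OCID VOLUME_OCID
attach_volume() {
  run oci compute volume-attachment attach \
    --instance-id "$1" \
    --volume-id "$2" \
    --type paravirtualized
}

# Example (hypothetical variables; 100 GB is an arbitrary size):
#   vol_id=$(create_volume "$COMPARTMENT_ID" "$STO01_AD" 100 vloemsto01-data)
#   attach_volume "$STO01_ID" "$vol_id"
```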

Once the new block volume is attached, we should create and share a new file system so we can mount it on both application servers.

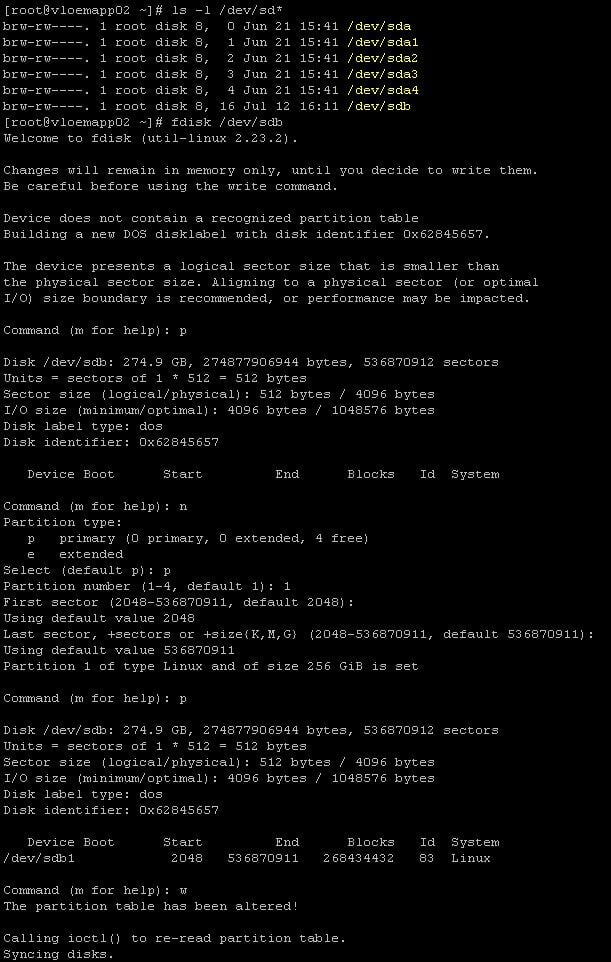

- First, create a new partition on the block volume (/dev/sdb in this example) using the “fdisk” utility.
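fdisk is interactive, but its prompts can also be answered from standard input if you want to script this step. The sketch below assumes a blank disk, on which fdisk starts a new DOS partition table, and creates a single primary partition covering the whole volume. Confirm the device name with `lsblk` first: writing the table destroys any existing data on that device.

```shell
#!/usr/bin/env bash
# Sketch: non-interactive fdisk run that creates one primary partition on a blank disk.

# fdisk_keys prints the answers to fdisk's prompts:
#   n = new partition, p = primary, 1 = partition number,
#   two empty answers = default first and last sector, w = write table and exit.
fdisk_keys() {
  printf 'n\np\n1\n\n\nw\n'
}

# partition_whole_disk DEVICE  (run as root; destructive)
partition_whole_disk() {
  local device="$1"
  fdisk_keys | fdisk "$device"
}

# Example on vloemsto01:
#   lsblk                            # confirm the new volume is /dev/sdb
#   partition_whole_disk /dev/sdb    # creates /dev/sdb1
```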

Then create an ext4 file system on the new partition and mount it:

```
[root@vloemsto01 ~]# mkfs.ext4 /dev/sdb1
[root@vloemsto01 ~]# mkdir /oracle
[root@vloemsto01 ~]# mount /dev/sdb1 /oracle
```
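The mount command on its own does not survive a reboot. A minimal sketch for adding an /etc/fstab entry on vloemsto01, referencing the partition by the UUID that `blkid` reports and using the `_netdev` and `nofail` options that OCI documentation recommends for block volumes; the `append_once` helper is our own and only adds the line if an identical one is not already present.

```shell
#!/usr/bin/env bash
# Sketch: persist the /oracle mount on vloemsto01 via /etc/fstab.

# fstab_entry UUID MOUNTPOINT prints an ext4 entry using the _netdev and
# nofail options recommended by OCI for block volumes.
fstab_entry() {
  printf 'UUID=%s %s ext4 defaults,_netdev,nofail 0 2\n' "$1" "$2"
}

# append_once FILE LINE appends LINE to FILE unless an identical line exists.
append_once() {
  local file="$1" line="$2"
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Example on vloemsto01 (as root):
#   uuid=$(blkid -s UUID -o value /dev/sdb1)
#   append_once /etc/fstab "$(fstab_entry "$uuid" /oracle)"
#   mount -a    # mounts anything listed but not yet mounted, surfacing typos now
```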

Export the file system to both application servers over NFS:

```
[root@vloemsto01 ~]# echo "/oracle vloemapp01(rw) vloemapp02(rw)" >> /etc/exports
[root@vloemsto01 ~]# exportfs -r
```
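One detail of the /etc/exports syntax is easy to miss: the option list must follow the host name with no space. “vloemapp01(rw)” grants read-write access to that host only, whereas “vloemapp01 (rw)” exports to vloemapp01 with the default options and grants read-write access to every other host. The helper below is a hypothetical convenience, not part of nfs-utils, that builds a correctly formed line for any number of clients.

```shell
#!/usr/bin/env bash
# Sketch: build an /etc/exports entry for one directory and several client hosts.

# exports_line DIRECTORY OPTIONS HOST...
# Attaches "(OPTIONS)" directly to each host name, with no space in between.
exports_line() {
  local dir="$1" opts="$2" host
  shift 2
  local out="$dir"
  for host in "$@"; do
    out+=" ${host}(${opts})"
  done
  printf '%s\n' "$out"
}

# Example on vloemsto01 (as root):
#   exports_line /oracle rw vloemapp01 vloemapp02 >> /etc/exports
#   exportfs -r    # re-read /etc/exports
#   exportfs -v    # list active exports with their effective options
```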

Mount the shared file system on both application servers (vloemapp01 and vloemapp02) using the commands below:

```
[root@vloemapp01 ~]# mkdir -p /u01/app/oracle/oms_shared_fs
[root@vloemapp01 ~]# chown -R oracle:oinstall /u01/app/oracle/oms_shared_fs
[root@vloemapp01 ~]# mount -t nfs vloemsto01:/oracle /u01/app/oracle/oms_shared_fs
[root@vloemapp01 ~]# echo "vloemsto01:/oracle /u01/app/oracle/oms_shared_fs nfs defaults 0 0" >> /etc/fstab

[root@vloemapp02 ~]# mkdir -p /u01/app/oracle/oms_shared_fs
[root@vloemapp02 ~]# chown -R oracle:oinstall /u01/app/oracle/oms_shared_fs
[root@vloemapp02 ~]# mount -t nfs vloemsto01:/oracle /u01/app/oracle/oms_shared_fs
[root@vloemapp02 ~]# echo "vloemsto01:/oracle /u01/app/oracle/oms_shared_fs nfs defaults 0 0" >> /etc/fstab
```
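The chown in these commands changes only the local mount point, which is hidden as soon as the NFS share is mounted over it; what the oracle user then sees is the ownership of /oracle on vloemsto01, which belongs to root after mkfs. Because the export uses the default root_squash option, root on the application servers cannot change that ownership through the share either. One way to handle it, sketched below under the assumptions that oinstall is the oracle user’s primary group and that both application servers use the same numeric IDs (they should, since vloemapp02 was cloned from vloemapp01’s image), is to read the IDs on an application server and apply them on the storage server.

```shell
#!/usr/bin/env bash
# Sketch: find the numeric owner to apply to the exported directory on vloemsto01.
# Assumes oinstall is oracle's primary group and the IDs match on both app servers.

# owner_ids USER prints "UID:GID" (GID of USER's primary group) on this host.
owner_ids() {
  printf '%s:%s\n' "$(id -u "$1")" "$(id -g "$1")"
}

# Example:
#   on vloemapp01:  owner_ids oracle        # note the UID:GID pair it prints
#   on vloemsto01:  chown UID:GID /oracle   # apply that pair to the export root
#   on vloemapp01:  su - oracle -c 'touch /u01/app/oracle/oms_shared_fs/.write_test'
```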

Standby Database

To achieve Level 3 of high availability for OEM 13.5, we need to make sure that the database has a disaster recovery solution based on Oracle Data Guard.

Creating a standby database is a widely documented procedure and is outside the scope of this series. You can follow a generic procedure for creating a physical standby database, such as the one below, or an OCI-specific procedure for using Oracle Data Guard on OCI.

Creating a Physical Standby with RMAN Active Duplicate in 11.2.0.3

Thank you for reading! Please share your comments below.


