Making it easier to graph your infrastructure’s performance data

- Dashboard search

- Templated dashboards

- Save / load from Elasticsearch and / or JSON file

- Quickly add functions (search, typeahead)

- Direct link to Graphite function documentation

- Graph annotation

- Multiple Graphite or InfluxDB data sources

- Ability to switch between data sources

- Show graphs from different data sources on the same dashboard

class { 'graphite':
  gr_web_server              => 'none',
  gr_web_cors_allow_from_all => true,
}

Since my module does not manage Apache (or Nginx), it is necessary to add something like the following to your node’s manifest to create a virtual host for Grafana:

# Grafana is to be served by Apache
class { 'apache':
  default_vhost => false,
}

# Create Apache virtual host
apache::vhost { 'grafana.example.com':
  servername      => 'grafana.example.com',
  port            => 80,
  docroot         => '/opt/grafana',
  error_log_file  => 'grafana-error.log',
  access_log_file => 'grafana-access.log',
  directories     => [
    {
      path           => '/opt/grafana',
      options        => [ 'None' ],
      allow          => 'from All',
      allow_override => [ 'None' ],
      order          => 'Allow,Deny',
    },
  ],
}
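If you serve Grafana with Nginx instead, a rough equivalent of the virtual host above might look like the following. This is only a sketch: it assumes the community puppet-nginx module, which is not part of my module, and older releases of that module name the defined type nginx::resource::vhost rather than nginx::resource::server.

```puppet
# Assumption: the puppet-nginx module is installed; my module does not require it.
class { 'nginx': }

# Serve the static Grafana files from the same directory used as the
# Apache docroot above. On older module releases this defined type is
# called nginx::resource::vhost.
nginx::resource::server { 'grafana.example.com':
  server_name => ['grafana.example.com'],
  listen_port => 80,
  www_root    => '/opt/grafana',
}
```

Grafana 1.x is a purely client-side application, so the web server only needs to serve static files from that directory.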

And the Grafana declaration itself:

class { 'grafana':
  elasticsearch_host => 'elasticsearch.example.com',
  graphite_host      => 'graphite.example.com',
}
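Puppet does not guarantee any ordering between the grafana class and the Apache virtual host unless you declare one. If you want Grafana in place before the virtual host is configured, one option is a chaining arrow in the node's manifest. This is a sketch that assumes the module installs Grafana under /opt/grafana, as the docroot above suggests, and that both declarations live in the same catalog:

```puppet
# Assumption: Class['grafana'] places Grafana under /opt/grafana,
# the docroot served by the virtual host declared earlier.
# Apply everything in the grafana class before the virtual host.
Class['grafana'] -> Apache::Vhost['grafana.example.com']
```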

Now that my module was working, it was time to publish it to the Puppet Forge. I converted my Modulefile to metadata.json, added a .travis.yml file to my repository and enabled integration with Travis CI, built the module and uploaded it to the Forge.

Since its initial release, I have updated the module to deploy Grafana version 1.6.1 by default, including updating the content of the config.js ERB template, and have added support for InfluxDB. I am pretty happy with the module and hope that you find it useful.

I do have plans to add more capabilities to the module, including support for more of Grafana’s configuration file settings, having the module manage the web server’s configuration similar to how Daniel’s module does it, and adding a stronger test suite so I can ensure compatibility with more operating systems and Ruby / Puppet combinations.

I welcome any questions, suggestions, bug reports and / or pull requests you may have. Thanks for your time and interest!

Project page: https://github.com/bfraser/puppet-grafana

Puppet Forge URL: https://forge.puppetlabs.com/bfraser/grafana

On this page

Share this

Share this

More resources

Learn more about Pythian by reading the following blogs and articles.

Monitoring Cassandra with Grafana and Influx DB

![]()

Monitoring Cassandra with Grafana and Influx DB

Mar 16, 2015 12:00:00 AM

4

min read

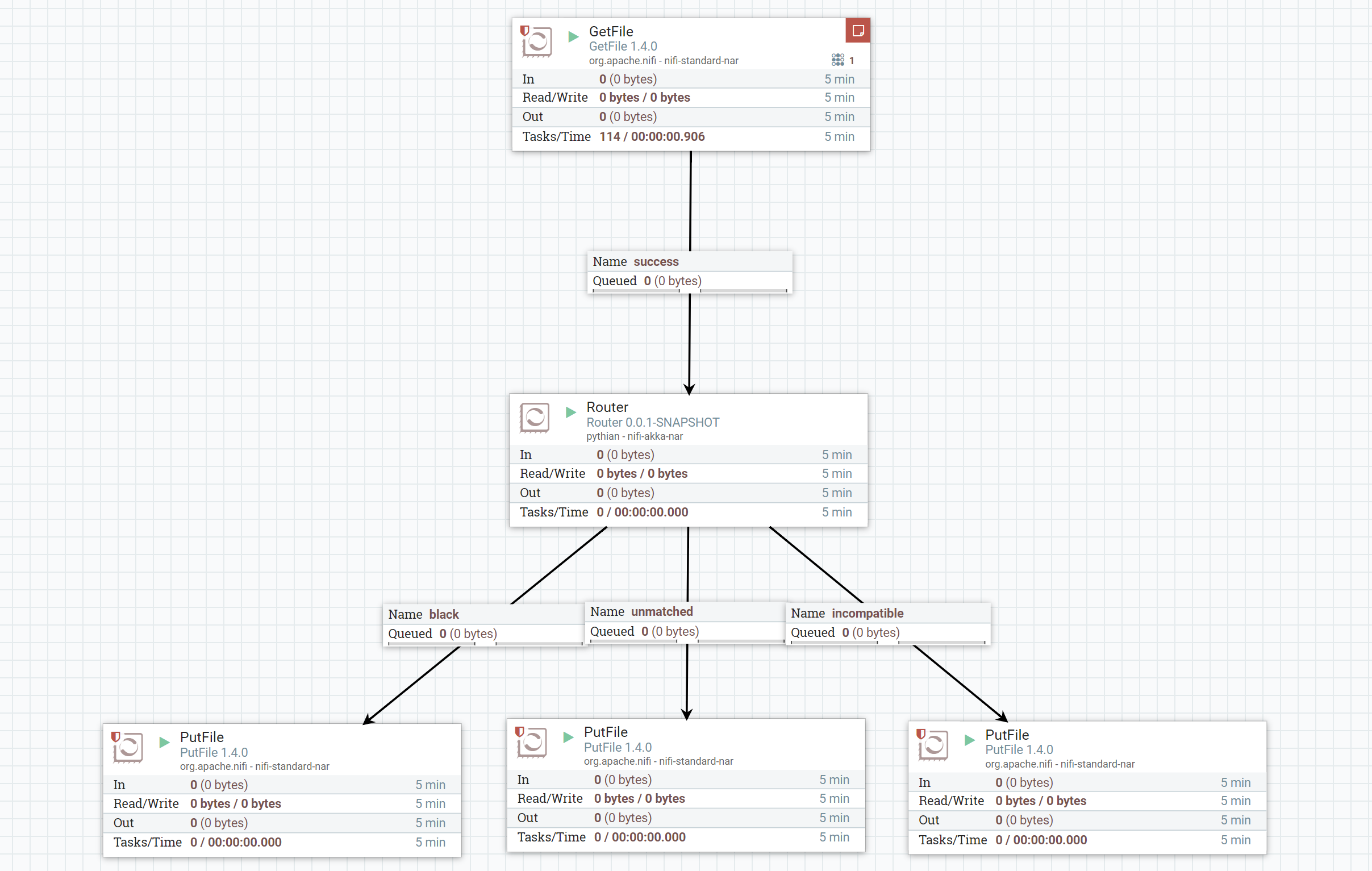

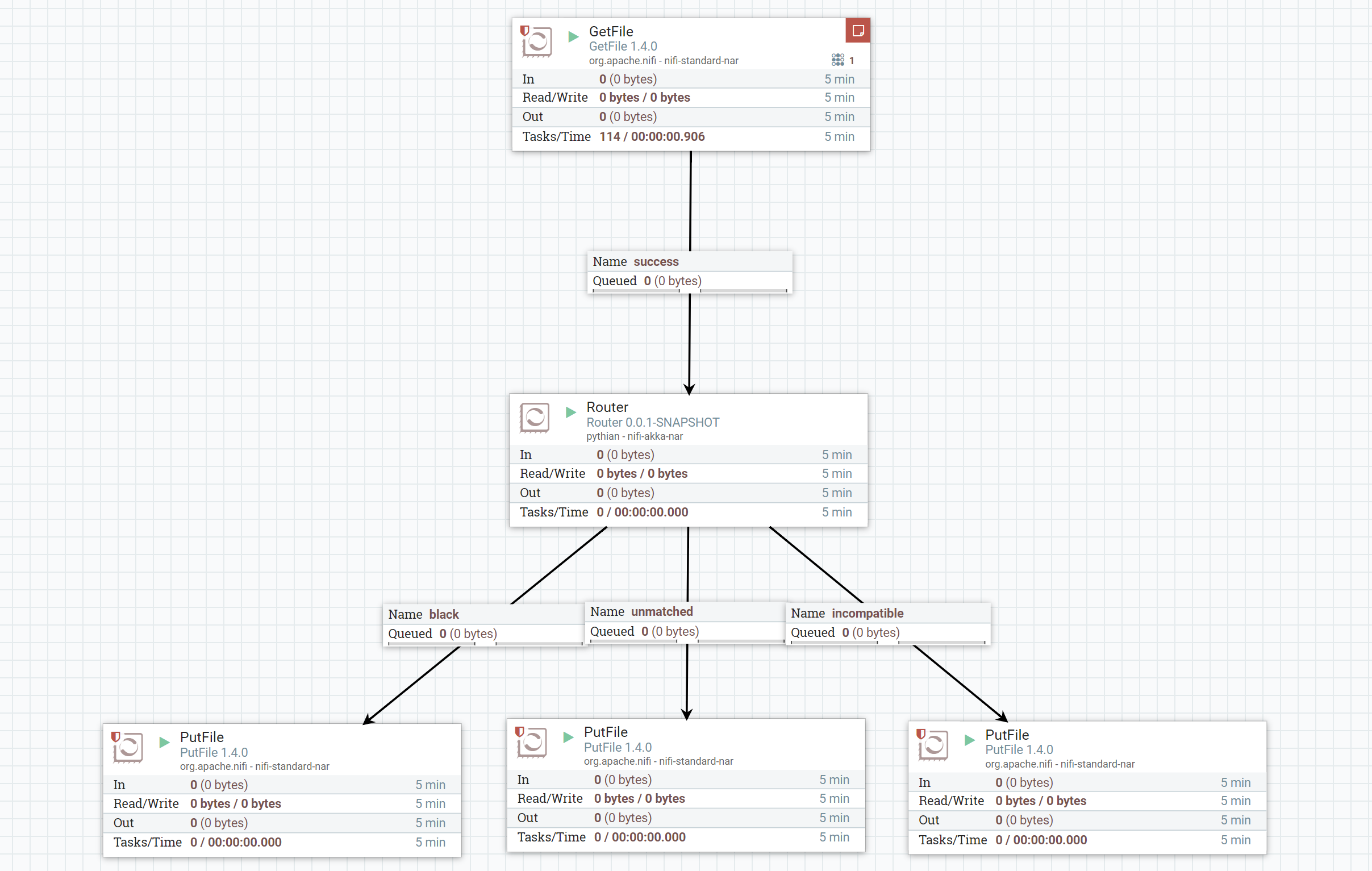

Building a custom routing NiFi processor with Scala

Building a custom routing NiFi processor with Scala

Jan 4, 2018 12:00:00 AM

4

min read

Extend Oracle Enterprise Manager (OEM) Compatibility with Proxy Monitoring

Extend Oracle Enterprise Manager (OEM) Compatibility with Proxy Monitoring

Jul 6, 2023 12:00:00 AM

14

min read

Ready to unlock value from your data?

With Pythian, you can accomplish your data transformation goals and more.