Change Your system_auth Replication Factor in Cassandra

Occasionally, clients reach out to us with authentication issues when a node is down. While this scenario shouldn’t happen in a high availability database management system (DBMS), it can if you miss a couple of very relevant lines in the Apache Cassandra documentation. I’ll explain why and how to permanently avoid it.

System keyspaces and Replication Strategy

Depending on the version, Apache Cassandra ships with up to seven out-of-the-box system keyspaces, which use different replication strategies:

| Keyspace | Replication Strategy | Added in version |
| --- | --- | --- |
| system | {'class': 'LocalStrategy'} | Native |
| system_traces | {'class': 'SimpleStrategy', 'replication_factor': '2'} | 1.2 |
| system_auth | {'class': 'SimpleStrategy', 'replication_factor': '1'} | 2.2 |
| system_distributed | {'class': 'SimpleStrategy', 'replication_factor': '3'} | 2.2 |
| system_schema | {'class': 'LocalStrategy'} | 3.0 |
| system_views | none | 4.0 |
| system_virtual_schema | none | 4.0 |

I won’t dive into what each of these does, but every keyspace holds a specific set of metadata tables, and most of that metadata is vital to what Cassandra does under the hood.

Some of these keyspaces use LocalStrategy, meaning that they store metadata locally, as opposed to in a distributed fashion.

The system_views and system_virtual_schema keyspaces are virtual keyspaces introduced in Apache Cassandra 4.0. They don’t store data in SSTables the way standard keyspaces do. Instead, they fetch data from sources such as Java MBeans or configuration files, so they don’t need a replication strategy. Virtual tables can nonetheless be queried like regular Cassandra tables.

Finally, some system keyspaces, such as system_auth, use SimpleStrategy because they store data meant to be replicated across the cluster. SimpleStrategy ignores datacenter and rack layout, so it isn’t desirable if we want to benefit from the cluster’s logical topology, but it’s the default because it’s generic enough to fit any topology configuration. Consider changing the replication strategy of those keyspaces to NetworkTopologyStrategy before you start using your cluster (and before setting up authentication and authorization).

The system_auth keyspace

Among the keyspaces above is the system_auth keyspace. It was introduced in Apache Cassandra 2.2 to add support for role-based access control (RBAC).

system_auth stores not only the metadata on created roles and access profiles but also the credentials used for CQL shell (cqlsh) and client authentication. And here lies the issue: by default, it uses replication factor (RF) 1.

This means that if you’re using authentication, as we advise, you have a single point of failure in Cassandra. And that is a no-no.

A common misconception is that with RF 1 one node will be responsible for all the authentication data in the cluster. That’s not quite true. Having RF 1 means that the whole authentication metadata set won’t be replicated. It will be, however, split among the nodes in the cluster.

The bottom line is: if a node crashes or becomes unavailable, a fraction of the authentication metadata will very likely also become unavailable, translating into blocked access.
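To make the point above concrete, here is a minimal sketch of how RF 1 spreads authentication data. It is a toy model only: it places each role on a node by hashing the role name, which stands in for (but is not) Cassandra’s actual partitioner and token ring. The node names and role names are hypothetical.

```python
import hashlib

def owner(role, nodes):
    """Toy placement for RF 1: hash the role name and pick exactly one node.

    This is an illustrative stand-in, not Cassandra's real token assignment.
    """
    digest = hashlib.md5(role.encode("utf-8")).hexdigest()
    return nodes[int(digest, 16) % len(nodes)]

def unavailable_roles(roles, nodes, down_node):
    """Roles whose only copy lives on the node that is down."""
    return [role for role in roles if owner(role, nodes) == down_node]

# Hypothetical cluster and roles, for illustration only.
nodes = ["node1", "node2", "node3"]
roles = ["cassandra", "app_reader", "app_writer", "analyst", "ops_admin", "reporting"]

for node in nodes:
    lost = unavailable_roles(roles, nodes, node)
    print(f"{node} down -> roles that cannot be read: {lost}")
```

Every role has exactly one owner, so no single node holds everything, yet losing any node that owns at least one role locks those roles out.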

To avoid this scenario, we need to change the RF. If you’re already using authentication/RBAC, you’ll also need to repair the system_auth keyspace.

Changing the replication strategy

The best course of action depends heavily on the stage your cluster is in and on whether and how you’ve configured authentication.

I’ll follow with three different procedures, each for a specific scenario.

No authentication set up yet

If you’re not yet using authentication, it’s pretty straightforward. Log into cqlsh and run:

ALTER KEYSPACE system_auth WITH replication = {'class': 'NetworkTopologyStrategy', '<datacenter_name>': 3 };

You should also include other datacenters if you’re running a multi-datacenter cluster.
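For a multi-datacenter cluster, the statement simply lists one RF per datacenter. As a rough sketch, the helper below builds that statement from a mapping of datacenter names to RFs. The function name and the datacenter names dc_east and dc_west are hypothetical; the names you pass should match the datacenter names your cluster actually reports.

```python
def alter_system_auth_cql(rf_by_datacenter):
    """Build an ALTER KEYSPACE statement for system_auth.

    rf_by_datacenter maps each datacenter name to its replication factor.
    """
    if not rf_by_datacenter:
        raise ValueError("at least one datacenter is required")
    options = ["'class': 'NetworkTopologyStrategy'"]
    for datacenter, rf in rf_by_datacenter.items():
        if rf < 1:
            raise ValueError(f"replication factor for {datacenter} must be >= 1")
        options.append(f"'{datacenter}': {rf}")
    return "ALTER KEYSPACE system_auth WITH replication = {" + ", ".join(options) + "};"

# Hypothetical datacenter names, for illustration only.
print(alter_system_auth_cql({"dc_east": 3, "dc_west": 3}))
# prints: ALTER KEYSPACE system_auth WITH replication = {'class': 'NetworkTopologyStrategy', 'dc_east': 3, 'dc_west': 3};
```

The printed statement can then be pasted into cqlsh.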

Cassandra authentication already set up

If you’re already using Cassandra’s authentication, you can run the previous command to alter the replication strategy, but bear in mind that authentication will be temporarily unavailable on most of the nodes until you run the following on every node:

nodetool repair -pr system_auth

This will spread the authentication and RBAC data across the cluster to the newly assigned replicas.
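Because the repair has to run on every node, it can help to script it. The sketch below is one possible way to do that, assuming passwordless SSH to each node and nodetool on the remote PATH; both are assumptions, as are the helper names and the example IP addresses. It runs the repairs one node at a time, which is a design choice to limit load rather than a requirement.

```python
import subprocess

# The repair command from this post, split into arguments.
REPAIR_COMMAND = ["nodetool", "repair", "-pr", "system_auth"]

def repair_commands(hosts):
    """One ssh invocation per host (assumes passwordless SSH is set up)."""
    return [["ssh", host] + REPAIR_COMMAND for host in hosts]

def run_repairs(hosts, runner=subprocess.run):
    """Run the repair on each host in turn, stopping at the first failure."""
    for command in repair_commands(hosts):
        runner(command, check=True)

# Dry run: print what would be executed for three hypothetical node addresses.
for command in repair_commands(["10.0.0.1", "10.0.0.2", "10.0.0.3"]):
    print(" ".join(command))
```

Calling run_repairs with the same list of hosts would execute the commands for real.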

Alternatively, if temporary authentication unavailability is a show stopper, you can follow a different procedure:

- Reset the Cassandra authenticator on all nodes in cassandra.yaml (to AllowAllAuthenticator) and authorizer (to AllowAllAuthorizer).

- Run a rolling restart.

- Change the replication strategy.

- Issue the repairs using the command above.

- Restore the original authenticator and authorizer in cassandra.yaml.

- Run a final rolling restart to bring back RBAC.

Cassandra and JMX integrated authentication already in place

Additionally, if you’re using Cassandra integrated authentication with JMX, you may not be able to issue repairs after changing the RF since your nodetool authentication depends on the system_auth data. If that’s the case, we recommend disabling JMX authentication first by editing in cassandra-env.sh:

JVM_OPTS="$JVM_OPTS -Dcom.sun.management.jmxremote.authenticate=false"

Then, run a rolling restart on the cluster, change the replication strategy, and repair all nodes as described in the scenarios above. After the repairs are complete, re-enable JMX authentication:

JVM_OPTS="$JVM_OPTS -Dcom.sun.management.jmxremote.authenticate=true"

Finally, run a rolling restart to refresh JMX settings and you’re set.

If temporary Cassandra unavailability for cqlsh and clients isn’t an option, you can, instead, reset the Cassandra authenticator and authorizer as instructed in the previous scenario and not disable JMX authentication.

FAQs

Which RF should I change it to?

We recommend using NetworkTopologyStrategy with RF 3 or 5. You can increase the RF further if you want to increase availability, but bear in mind that system_auth is read using QUORUM when authenticating the default superuser (cassandra), so the cost of this authentication will scale up with the RF. Likewise, all changes to RBAC will be more demanding on coordinators in proportion to the keyspace RF.

I changed the RF but now I can’t authenticate to my cluster. Did I lose my authentication data?

You didn’t. The number of replicas responsible for every set of authentication metadata increased, but the metadata itself was not streamed to new replicas (only the original one has it). Running a repair for the system_auth keyspace will fix this. You can run the following command on all nodes in the cluster:

nodetool repair -pr system_auth

And you’re good to go. Your authentication data is now fully replicated and highly available.

But I want to have RF match the number of nodes so I can authenticate from any node. Why only three?

Having RF 3 doesn’t mean that you can only authenticate from three nodes. It means that the whole authentication metadata set is split among all nodes, thrice over. You don’t need to authenticate from the node which stores your authentication metadata to be able to log in. As with any query, the node you’re directly accessing will play the role of a coordinator and ask the right replicas for the authentication data.

Since we query this keyspace with QUORUM for the default superuser and LOCAL_ONE for the remaining users, with RF 3 at least two of the replicas holding the relevant data must be down to cause authentication issues for the default superuser, and all three for other users. If your RF is seven, for example, then at least four of those replicas must be down before you bump into “cassandra” user authentication roadblocks.

Disclaimer: This only applies up to and including Cassandra 3.11.
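The arithmetic behind those numbers can be sketched as follows. This assumes a single datacenter, so that LOCAL_ONE can be served by any one of the RF replicas; the function names are made up for illustration.

```python
def quorum(rf):
    """Number of replicas a QUORUM read needs: a strict majority of RF."""
    return rf // 2 + 1

def replicas_down_to_break(rf, required):
    """Smallest number of unavailable replicas that leaves fewer than `required` alive."""
    return rf - required + 1

for rf in (3, 5, 7):
    superuser = replicas_down_to_break(rf, quorum(rf))  # QUORUM reads
    others = replicas_down_to_break(rf, 1)              # LOCAL_ONE reads
    print(f"RF {rf}: superuser login breaks with {superuser} replicas down, "
          f"other users with {others}")
# prints:
# RF 3: superuser login breaks with 2 replicas down, other users with 3
# RF 5: superuser login breaks with 3 replicas down, other users with 5
# RF 7: superuser login breaks with 4 replicas down, other users with 7
```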

This is the default replication for some reason. Isn’t it unsafe to change it?

I can’t tell you why this is the default RF but if anything, it’s unsafe to keep it. Having RF 1 makes sense when your cluster has only one node. Although if your cluster only has one node, you’ll need to reconsider whether Cassandra is the right fit for you because you’re not benefiting from high availability.

I hope you find this post helpful. Feel free to drop any questions in the comments and don’t forget to sign up for the next post.

Cassandra Database Consulting

Ready to handle massive data volumes with zero downtime?

Share this

Share this

More resources

Learn more about Pythian by reading the following blogs and articles.

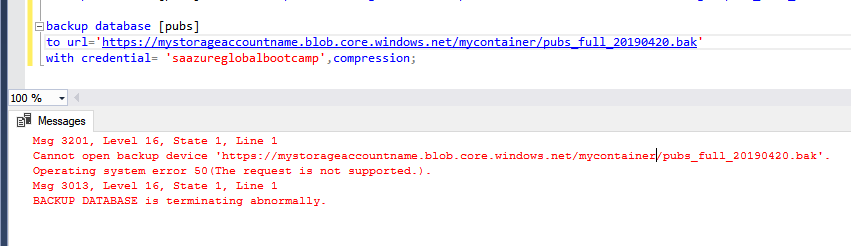

How to fix SQL backup to URL failure - operating system error 50

SAP on MSSQL: An 8-Step Homogeneous System Copy Approach

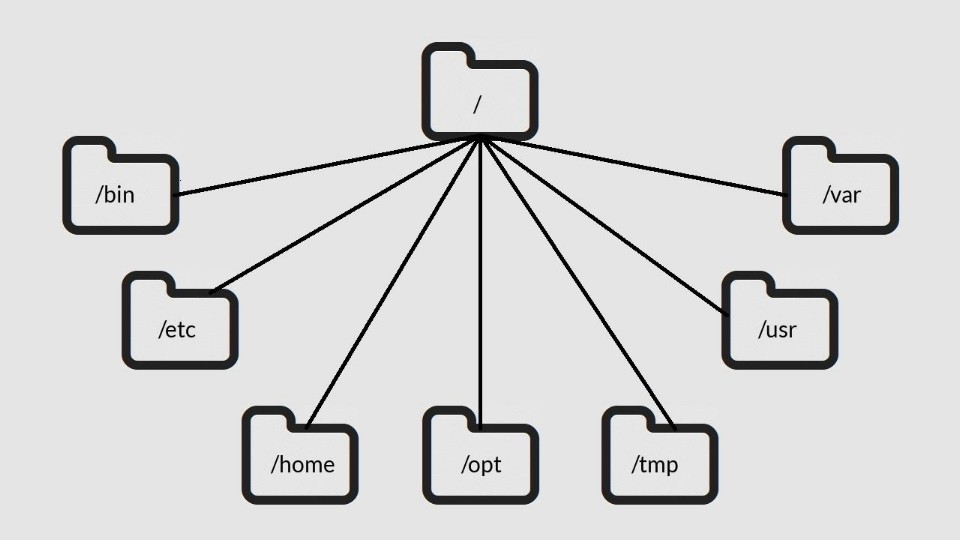

Linux file system for SQL Server DBAs

Ready to unlock value from your data?

With Pythian, you can accomplish your data transformation goals and more.