First touch penalty on AWS cloud

    CREATE TABLE test.testtab02 AS
    SELECT LEVEL AS id,
           dbms_random.String('x', 8) AS rnd_str_1,
           SYSDATE - ( LEVEL + dbms_random.Value(0, 1000) ) AS use_date,
           dbms_random.String('x', 8) AS rnd_str_2,
           SYSDATE - ( LEVEL + dbms_random.Value(0, 1000) ) AS acc_date
    FROM   dual
    CONNECT BY LEVEL < 1
    /

    INSERT /*+ append */ INTO test.testtab02
    WITH v1
         AS (SELECT dbms_random.String('x', 8) AS rnd_str_1,
                    SYSDATE - ( LEVEL + dbms_random.Value(0, 1000) ) AS use_date
             FROM   dual
             CONNECT BY LEVEL < 10000),
         v2
         AS (SELECT dbms_random.String('x', 8) AS rnd_str_2,
                    SYSDATE - ( LEVEL + dbms_random.Value(0, 1000) ) AS acc_date
             FROM   dual
             CONNECT BY LEVEL < 10000)
    -- Cartesian product of v1 and v2: 9,999 x 9,999 = 99,980,001 rows
    SELECT ROWNUM AS id,
           v1.rnd_str_1,
           v1.use_date,
           v2.rnd_str_2,
           v2.acc_date
    FROM   v1,
           v2
    /
    -- A direct-path (APPEND) insert must be committed before the same session can query the table
    COMMIT;
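Once the load has finished, the size of the segment can be checked with a query along these lines. This is a minimal sketch that assumes the session can query DBA_SEGMENTS; when connected as the table owner, USER_SEGMENTS without the owner filter should work as well. The column alias is mine.

```sql
-- Report the allocated size of the test table segment.
-- Assumption: the session has access to DBA_SEGMENTS.
SELECT segment_name,
       segment_type,
       blocks,
       ROUND(bytes / 1024 / 1024 / 1024, 2) AS size_gb
FROM   dba_segments
WHERE  owner = 'TEST'
AND    segment_name = 'TESTTAB02';
```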

The table is simple, about 4 GB in size, and has no indexes. To eliminate the potential impact of the network and other factors, I used "select count(*)". After creating the table and filling it with data, I took a snapshot of my RDS instance and restored it to another RDS instance. All the tests were done on the latest RDS Oracle 12.1.0.2 v11 EE on a db.t2.medium instance. Now we can look at the query and the results.

    orcl> set timing on
    orcl> set autotrace traceonly
    orcl> select count(*) from TESTTAB02;

    Elapsed: 00:04:43.56

    Execution Plan
    ----------------------------------------------------------
    Plan hash value: 3686556234

    ------------------------------------------------------------------------
    | Id  | Operation          | Name      | Rows  | Cost (%CPU)| Time     |
    ------------------------------------------------------------------------
    |   0 | SELECT STATEMENT   |           |     1 |     3   (0)| 00:00:01 |
    |   1 |  SORT AGGREGATE    |           |     1 |            |          |
    |   2 |   TABLE ACCESS FULL| TESTTAB02 |     1 |     3   (0)| 00:00:01 |
    ------------------------------------------------------------------------

    Statistics
    ----------------------------------------------------------
             26  recursive calls
              0  db block gets
         630844  consistent gets
         630808  physical reads
              0  redo size
            530  bytes sent via SQL*Net to client
            511  bytes received via SQL*Net from client
              2  SQL*Net roundtrips to/from client
              5  sorts (memory)
              0  sorts (disk)
              1  rows processed

    orcl>

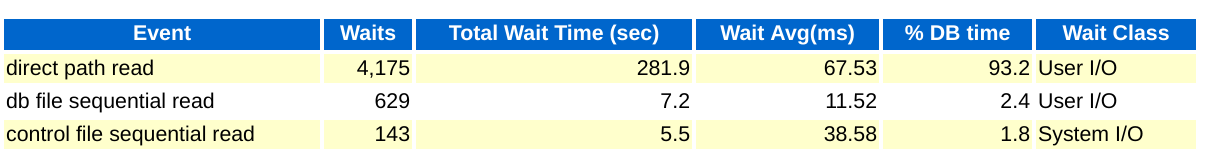

And here is an excerpt from an AWR report for the run:

We had 630808 physical reads, and the run took about four minutes and 44 seconds to complete. Oracle chose direct path reads to get the data in our case, and the AWR data shows the same. Now we can repeat the query and compare the timings and the numbers for the wait events. Since we have already read all the blocks, the impact of the "first touch" should be eliminated. To be on the safe side, the query is repeated after an instance restart. Here is the same query executed after the reboot.

    orcl> set timing on
    orcl> set autotrace traceonly
    orcl> select count(*) from TESTTAB02;

    Elapsed: 00:01:19.98

    Execution Plan
    ----------------------------------------------------------
    Plan hash value: 3686556234

    ------------------------------------------------------------------------
    | Id  | Operation          | Name      | Rows  | Cost (%CPU)| Time     |
    ------------------------------------------------------------------------
    |   0 | SELECT STATEMENT   |           |     1 |     3   (0)| 00:00:01 |
    |   1 |  SORT AGGREGATE    |           |     1 |            |          |
    |   2 |   TABLE ACCESS FULL| TESTTAB02 |     1 |     3   (0)| 00:00:01 |
    ------------------------------------------------------------------------

    Statistics
    ----------------------------------------------------------
             26  recursive calls
              0  db block gets
         630844  consistent gets
         630808  physical reads
              0  redo size
            530  bytes sent via SQL*Net to client
            511  bytes received via SQL*Net from client
              2  SQL*Net roundtrips to/from client
              5  sorts (memory)
              0  sorts (disk)
              1  rows processed

    orcl>

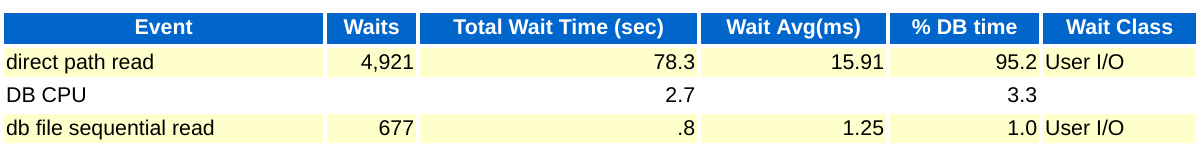

And here is the AWR for the second run:

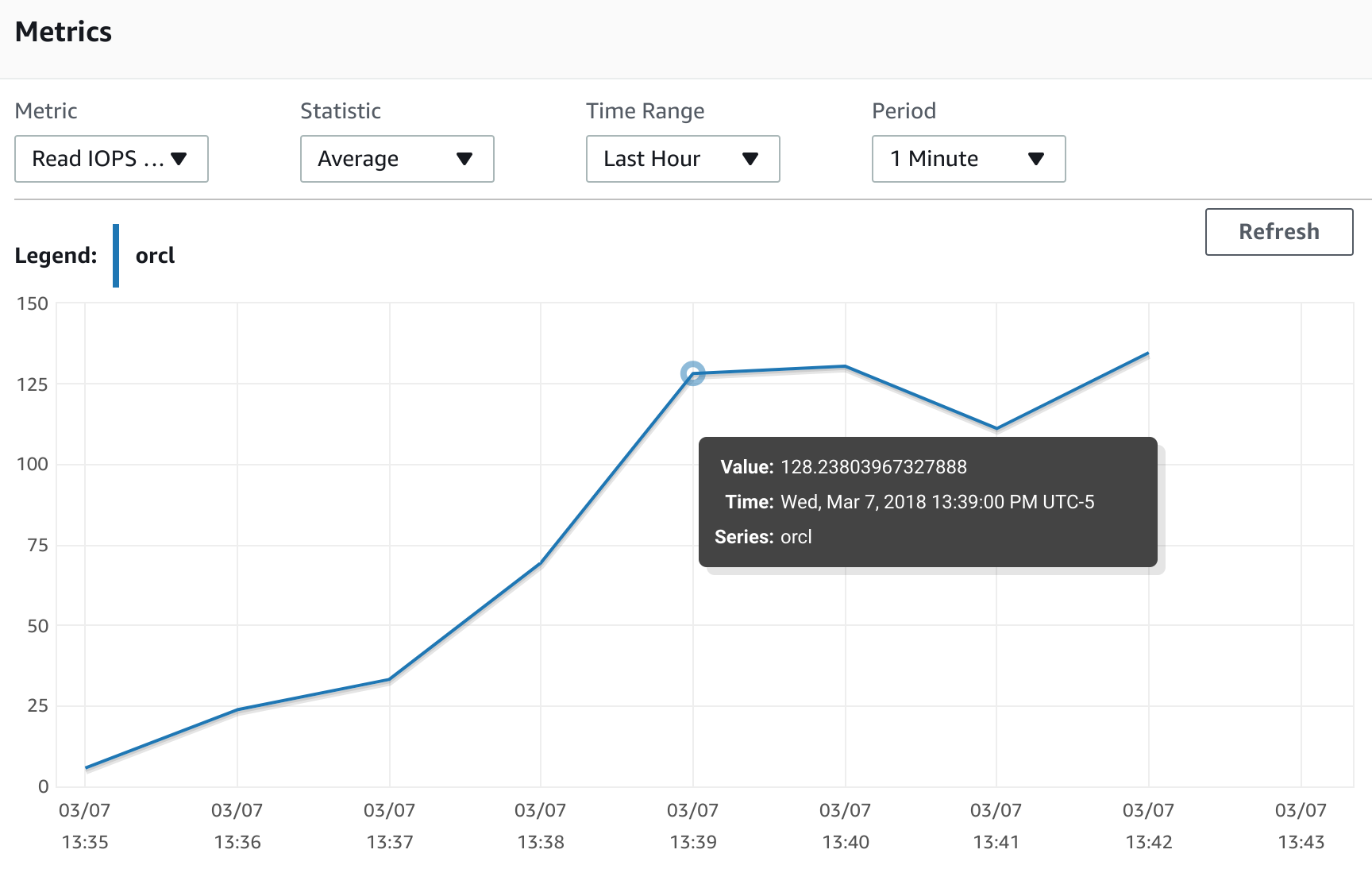
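As a quick cross-check, the elapsed times and the physical read count reported by the two autotrace runs above can be turned into a speedup factor and an average read rate. The literals are copied from that output; the column aliases are mine.

```sql
-- Elapsed times converted to seconds: 00:04:43.56 and 00:01:19.98.
-- 630808 is the physical read count reported by both runs.
SELECT ROUND((4 * 60 + 43.56) / (1 * 60 + 19.98), 2) AS speedup,
       ROUND(630808 / (4 * 60 + 43.56))              AS blocks_per_sec_run1,
       ROUND(630808 / (1 * 60 + 19.98))              AS blocks_per_sec_run2
FROM   dual;
```

Both read rates use the same block count, so their ratio is the same as the speedup.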

We see exactly the same number of physical reads, but the elapsed time dropped from 4:44 to 1:20. The query ran about 3.5 times faster. When we look at the AWR data, we can see that the average 'direct path read' wait time dropped from 68 ms to 16 ms. We can also compare the AWS monitoring graphs. Here is the first run:

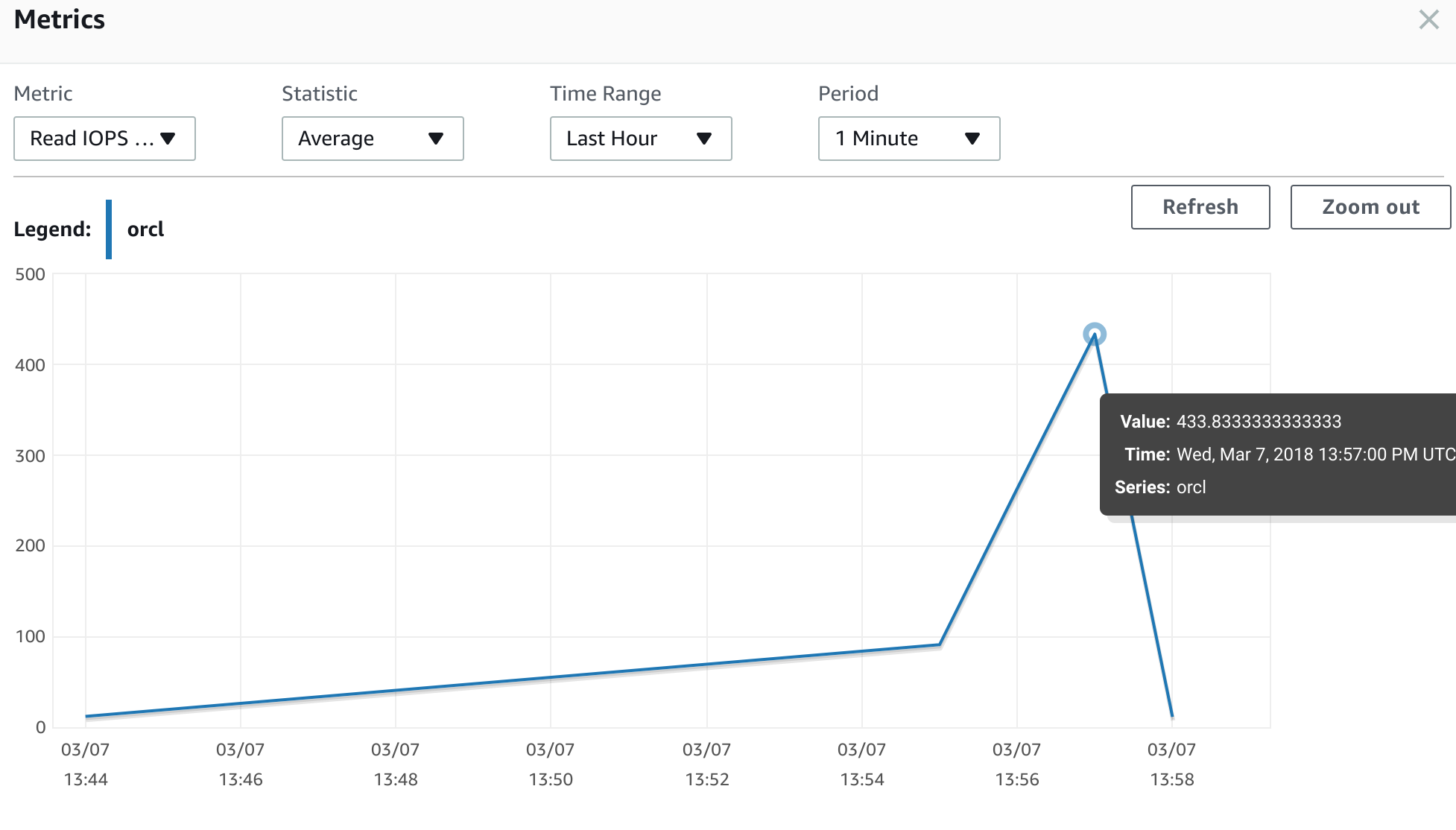

and for the second run:

We clearly see that read IOPS increased from 128 on the first run to 433 on the second run, more than three times higher. The penalty is pretty high: right after the restore from the snapshot, our IO performance was roughly 70 percent below normal. We have to be ready for that if we plan to do a production cutover using snapshot backups.

Let's see what we can do about it. In the case of EBS volumes, it is as simple as running a "dd" command on a Linux host that copies all the blocks from the volume to "/dev/null". Of course, it may take some time, and knowing where the operational data is placed can shorten it, since you may not need to do it for old or archived data.

Unfortunately, we cannot apply the same technique to RDS, since we don't have direct access to the OS level and have to use SQL to read all the data. We need to read not only the table data but also indexes and any other segments, such as LOB segments. To do so, we may need a set of procedures that properly reads all the necessary blocks at least once before going to production. As a result, it can increase the cutover time or lead to a different migration strategy based on logical replication, using AWS DMS, Oracle GoldenGate, Dbvisit, or a similar tool.
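The SQL-based warm-up described above could start from something like the following. This is only a minimal sketch under several assumptions: it runs from SQL*Plus as a user that can query DBA_TABLES and DBA_EXTERNAL_TABLES, it uses the TEST schema from the example, and it covers heap tables only. Indexes and LOB segments are not touched by this loop and would need their own statements.

```sql
SET SERVEROUTPUT ON

DECLARE
  l_rows  NUMBER;
  l_start NUMBER;
BEGIN
  -- Assumption: schema TEST; skip temporary, nested, index-organized and external tables
  FOR t IN (SELECT owner, table_name
            FROM   dba_tables
            WHERE  owner = 'TEST'
            AND    temporary = 'N'
            AND    nested = 'NO'
            AND    iot_type IS NULL
            AND    (owner, table_name) NOT IN (SELECT owner, table_name
                                               FROM   dba_external_tables))
  LOOP
    l_start := DBMS_UTILITY.GET_TIME;  -- hundredths of a second

    -- The FULL hint asks for a full table scan, so the table blocks are read
    -- even if an index could answer COUNT(*)
    EXECUTE IMMEDIATE
      'SELECT /*+ FULL(x) */ COUNT(*) FROM "' || t.owner || '"."' || t.table_name || '" x'
      INTO l_rows;

    DBMS_OUTPUT.PUT_LINE(t.table_name || ': ' || l_rows || ' rows, ' ||
                         (DBMS_UTILITY.GET_TIME - l_start) / 100 || ' s');
  END LOOP;
END;
/
```

Running the tables one after another keeps the sketch simple. Splitting the list across several sessions may shorten the warm-up, but I have not tested that here.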
