Dockerized PMM in production

Getting a PMM server running on Docker is just a matter of following a few simple steps. There are, however, some additional steps worth taking for a long-term production deployment. This blog post covers some of the most important ones, based on my experience with PMM so far.

PMM data storage

If you want your deployment to be a long-term monitoring solution, you probably won’t want metrics and metadata to be stored on the root filesystem. By default, if you don't specify any volume-related parameters, Docker keeps the container's volumes there, under /var/lib/docker:

$ sudo docker inspect 9904e9e0c8a0 | jq '.[].Mounts[].Source'
"/var/lib/docker/volumes/b69880600efbe950f46c34254db4be2ef6e1686c156aa21b13b2674a91187d53/_data"
"/var/lib/docker/volumes/06b5c7a620271b3cfe7c4d6dd3071362eaa8e55eca502a4fdda03f2a446ef21c/_data"
"/var/lib/docker/volumes/1232d1096783e14c8783d5c7cbb7863e1158bda5c7f95013761c6996f320da0a/_data"
"/var/lib/docker/volumes/a86f9f43d9e11305c413fca0d4a2715e327f4b83e5732d7a17da02bb2e3d0c01/_data"

To map your Docker volumes to a different host path, use bind mounts:

$ sudo docker create \
    -v /pmmdata/prometheus/data:/opt/prometheus/data \
    -v /pmmdata/consul-data:/opt/consul-data \
    -v /pmmdata/mysql:/var/lib/mysql \
    -v /pmmdata/grafana:/var/lib/grafana \
    --name pmm-data \
    percona/pmm-server:latest /bin/true

The above syntax should work on any version of Docker, although the behaviour is not exactly the same across versions:

- When using docker.io (i.e. CentOS < 7), bind mounts hide any files that already exist in the image at the mount point. Since the MySQL data directory is not initialized by the entrypoint script, MySQL will fail to start (seen on Ubuntu 16.04 with Docker 1.13 and CentOS 6.5 with Docker 1.7). This behaviour was not observed on Docker v17. To prevent the MySQL instance from failing, copy the data directories to their final destination after they have been initialized. To do so:

- Create the pmm-data container without mappings

- Create and start pmm-server container to initialize the data directory correctly

- Stop pmm-server and copy the files from the path on the root volume to the desired paths

- Create a new pmm-data container using the new paths

- Launch a new pmm-server container using the new pmm-data volumes

- On CentOS, file privileges and owners set from within the container are visible from the host OS and may overlap with existing local or LDAP users.

$ sudo docker run --rm --volumes-from pmm-data -it percona/pmm-server:latest chown -Rv pmm:pmm /opt/prometheus/data /opt/consul-data
$ sudo docker run --rm --volumes-from pmm-data -it percona/pmm-server:latest chown -Rv grafana:grafana /var/lib/grafana
$ sudo docker run --rm --volumes-from pmm-data -it percona/pmm-server:latest chown -Rv mysql:mysql /var/lib/mysql
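The copy procedure from the first item above could be scripted roughly as follows. This is a sketch under assumptions, not a tested migration: the /pmmdata paths reuse the earlier bind-mount example, the `run` helper and the `DRY_RUN` variable are hypothetical names, and the published port is an assumption you should adapt. By default it only prints the commands it would execute.

```shell
#!/bin/sh
# Sketch of the "initialize first, then move" procedure described above.
# DRY_RUN=1 (the default) only prints each command; set DRY_RUN=0 to execute.
IMAGE="percona/pmm-server:latest"
DEST="/pmmdata"   # host path taken from the bind-mount example above

run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

migrate_pmm_data() {
    # 1. pmm-data without host mappings (volumes land under /var/lib/docker)
    run docker create -v /opt/prometheus/data -v /opt/consul-data \
        -v /var/lib/mysql -v /var/lib/grafana --name pmm-data "$IMAGE" /bin/true

    # 2. Start pmm-server once so the data directories get initialized
    #    (when executing for real, wait until the web UI answers before step 3)
    run docker run -d -p 80:80 --volumes-from pmm-data --name pmm-server "$IMAGE"

    # 3. Stop it and copy the initialized directories to the final destination
    run docker stop pmm-server
    run docker run --rm --volumes-from pmm-data -v "$DEST:/backup" "$IMAGE" \
        sh -c 'mkdir -p /backup/prometheus &&
               cp -a /opt/prometheus/data /backup/prometheus/ &&
               cp -a /opt/consul-data /var/lib/mysql /var/lib/grafana /backup/'

    # 4. Replace pmm-data with one that bind-mounts the new paths
    #    (the old volumes are left on disk as a fallback)
    run docker rm pmm-server pmm-data
    run docker create -v "$DEST/prometheus/data:/opt/prometheus/data" \
        -v "$DEST/consul-data:/opt/consul-data" -v "$DEST/mysql:/var/lib/mysql" \
        -v "$DEST/grafana:/var/lib/grafana" --name pmm-data "$IMAGE" /bin/true

    # 5. Launch a new pmm-server on top of the new pmm-data volumes
    run docker run -d -p 80:80 --volumes-from pmm-data --name pmm-server "$IMAGE"
}

migrate_pmm_data
```

Reviewing the printed commands before running with DRY_RUN=0 is the point of the wrapper: the copy step is where a wrong path would do the most damage.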

Memory, retention and space usage

By default, a Docker container can use as much memory as the host’s kernel scheduler allows. In an environment where the host is shared with other containers or processes, you may want to limit the amount of memory the PMM container can allocate. To do so in a standalone Docker environment:

$ sudo docker run --memory=4G ..

Also by default, Prometheus will allocate all the memory available to the container for storing the most recently used data chunks. If the memory available to the container is limited, restrict Prometheus memory usage for data chunks so it leaves enough memory for MySQL, Consul and Grafana. In the example below we set a limit of 2 GB (the value is given in kilobytes):

$ sudo docker run -e METRICS_MEMORY=2097152 ..

Keep in mind that, as per the documentation, total memory usage by Prometheus will be higher than that. You should set this parameter to roughly 2/3 of the expected total memory consumption.

Retention is another setting you may want to check. By default, Prometheus stores time-series data for 30 days, and QAN stores query data for 8 days. To change these defaults, use the following variables (values in hours):

$ sudo docker run -e METRICS_RETENTION=4400h -e QUERIES_RETENTION=4400h ..
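As a quick illustration of the 2/3 rule, the snippet below derives a METRICS_MEMORY value from a memory budget. The 3072 MB budget and the variable names are hypothetical, and the value is assumed to be in kilobytes, as the 2 GB example above implies.

```shell
#!/bin/sh
# Hypothetical budget: total memory (in MB) we are willing to let Prometheus use.
prometheus_budget_mb=3072

# Roughly 2/3 of the budget, converted to KB (assumed unit of METRICS_MEMORY).
metrics_memory_kb=$(( prometheus_budget_mb * 1024 * 2 / 3 ))

# Print the resulting options instead of starting a container.
echo "sudo docker run --memory=4G -e METRICS_MEMORY=${metrics_memory_kb} .."
```

With a 3 GB budget this yields 2097152, the same value used in the example above, which leaves about 1 GB of a 4 GB container for MySQL, Consul and Grafana.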

Of course, total space usage depends on the number of monitored hosts, the retention period and even the metrics resolution. The default sample rate is 1 second. You could consider increasing METRICS_RESOLUTION (in seconds) to reduce the storage footprint:

$ sudo docker run -e METRICS_RESOLUTION=5s
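To get a feel for how retention and resolution interact, the sketch below counts the samples kept per time series. The function name is hypothetical, and the figures only show relative scaling: real disk usage also depends on how many series each host exposes and on how well Prometheus compresses them.

```shell
#!/bin/sh
# Samples stored per time series = retention period / scrape interval.
samples_per_series() {
    retention_hours=$1
    resolution_seconds=$2
    echo $(( retention_hours * 3600 / resolution_seconds ))
}

samples_per_series 720 1    # 30 days (default retention) at 1s resolution
samples_per_series 720 5    # 30 days at 5s resolution
samples_per_series 4400 5   # 4400h at 5s resolution
```

Going from 1s to 5s cuts the sample count by a factor of five, so a 4400h retention at 5s stores only slightly more samples per series than the default 30 days at 1s.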

Network connectivity

When installing PMM in an environment with restrictive connectivity between local hosts, I realized that Consul specifically requires access to the monitored hosts. The pmm-admin option below is very helpful for troubleshooting networking issues, as it reports connectivity status in both directions. The Consul page can also be used on the server side, under /consul/#/dc1.

$ pmm-admin check-network
PMM Network Status

Server Address | 172.16.3.100:8080
Client Address | 172.16.3.1

* System Time
NTP Server (0.pool.ntp.org)         | 2018-03-06 14:09:48 +0000 UTC
PMM Server                          | 2018-03-06 14:09:48 +0000 GMT
PMM Client                          | 2018-03-06 14:09:48 +0000 UTC
PMM Server Time Drift               | OK
PMM Client Time Drift               | OK
PMM Client to PMM Server Time Drift | OK

* Connection: Client --> Server
-------------------- -------
SERVER SERVICE       STATUS
-------------------- -------
Consul API           OK
Prometheus API       OK
Query Analytics API  OK

Connection duration | 121.734µs
Request duration    | 897.289µs
Full round trip     | 1.019023ms

* Connection: Client <-- Server
-------------- ---------------------- ------------------ ------- ---------- ---------
SERVICE TYPE   NAME                   REMOTE ENDPOINT    STATUS  HTTPS/TLS  PASSWORD
-------------- ---------------------- ------------------ ------- ---------- ---------
linux:metrics  remote.mysql.my.domain 172.16.3.1:42000   OK      YES        -
mysql:metrics  remote.mysql.my.domain 172.16.3.1:42002   OK      YES        -
mysql:metrics  remote.mysql.my.domain 172.16.3.1:42003   OK      YES        -
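If you want to run this check from a script, a small filter can pull out the endpoints the server cannot reach. This is only a sketch: the function name is hypothetical, and it assumes the column order shown in the output above (service type, name, remote endpoint, status).

```shell
#!/bin/sh
# Print NAME and REMOTE ENDPOINT for every metrics service whose STATUS is not OK.
# Assumes the columns: SERVICE TYPE, NAME, REMOTE ENDPOINT, STATUS, ...
failed_endpoints() {
    awk '$1 ~ /:metrics$/ && $4 != "OK" { print $2, $3 }'
}

# Intended usage on a monitored host:
#   pmm-admin check-network | failed_endpoints
```

An empty result means every listed exporter was reachable from the server; any line printed points at a host and port worth checking in the firewall rules.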

Remote MySQL monitoring

There are some situations in which having the PMM client local to the database server is not feasible or desirable (client binary availability for the guest OS, network traffic limitations, etc.). In these situations, it is possible to monitor a MySQL instance through a non-local client. To configure a remote instance, specify all instance parameters when adding a MySQL instance:

$ pmm-admin add mysql --disable-tablestats --host remote.mysql.my.domain --password redacted --user pmm remote_mysql

You can still get Query Analytics to work if you are using MySQL 5.6 or above and you choose Performance Schema as the source for query data collection.

Web access authentication

Enabling authentication for your production server web console may be a good idea to prevent environment details from being accessed by unauthorized personnel. A very basic authentication mechanism can be enabled by using the following options:

$ sudo docker run -e SERVER_USER=USER_NAME -e SERVER_PASSWORD=YOUR_PASSWORD ..
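Since the options in this post are all passed to the same docker run invocation, it can help to see them assembled in one place. The sketch below only prints a command line; the function name is hypothetical, the values are the placeholders used throughout this post, and the published port and container names are assumptions to adapt.

```shell
#!/bin/sh
# Assemble the docker run options discussed in this post into one command line.
# All values are placeholders; the command is printed, not executed.
pmm_run_command() {
    user=$1
    password=$2
    printf '%s ' sudo docker run -d -p 80:80 \
        --volumes-from pmm-data --name pmm-server \
        --memory=4G \
        -e METRICS_MEMORY=2097152 \
        -e METRICS_RETENTION=4400h -e QUERIES_RETENTION=4400h \
        -e METRICS_RESOLUTION=5s \
        -e "SERVER_USER=$user" -e "SERVER_PASSWORD=$password" \
        percona/pmm-server:latest
    echo
}

pmm_run_command USER_NAME YOUR_PASSWORD
```

Keeping the options in a small script like this also documents the deployment, which is handy when the container has to be recreated after an image upgrade.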

Final thoughts

As with any other technology, deployment guides may not always cover all aspects of a service, and additional reading combined with a few real-life situations ends up giving you the extra details needed for a production setup. This is my humble compilation of those details for PMM, and I hope it saves you some time.

Share this

More resources

Learn more about Pythian by reading the following blogs and articles.

Automation, PowerShell and Word Templates - let the technician do tech

Deploying a Private Cloud at Home — Part 2

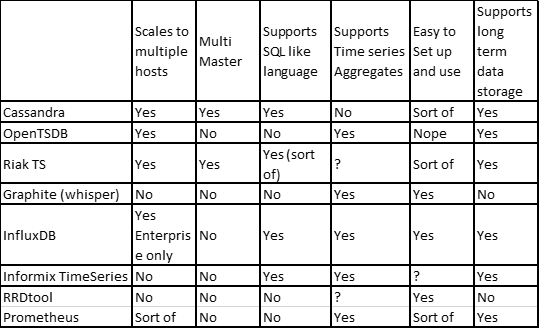

Cassandra as a time series database

Ready to unlock value from your data?

With Pythian, you can accomplish your data transformation goals and more.