How to decipher oracle Internal datatype storage

What started out as an investigation into how the optimizer deals with predicates that are outside the known range of value became something else when I tried to determine just what Oracle believes low and high values of the range to be. I didn't expect to have anything to add to the topic, as it has been rather well covered; I just wanted to better understand it by creating a few examples that demonstrate what can happen.

As of yet, I have not yet gotten that far. One of the first things I wanted to know for this is what Oracle believes the low and high values to be. These can be seen in both DBA_TAB_COLUMNS and DBA_TAB_COL_STATISTICS in the LOW_VALUE and HIGH_VALUE columns. The DBA_TAB_COL_STATISTICS view is preferred, as these columns are maintained in DBA_TAB_COLUMNS only for backward compatibility with Oracle 7.

The Metadata Challenge: Understanding RAW Storage

SQL> desc dba_tab_col_statistics Name Null? Type ----------------------------------------- -------- ------------------------------------ OWNER VARCHAR2(128) TABLE_NAME VARCHAR2(128) COLUMN_NAME VARCHAR2(128) NUM_DISTINCT NUMBER LOW_VALUE RAW(1000) HIGH_VALUE RAW(1000) DENSITY NUMBER NUM_NULLS NUMBER NUM_BUCKETS NUMBER LAST_ANALYZED DATE SAMPLE_SIZE NUMBER GLOBAL_STATS VARCHAR2(3) USER_STATS VARCHAR2(3) NOTES VARCHAR2(63) AVG_COL_LEN NUMBER HISTOGRAM VARCHAR2(15) SCOPE VARCHAR2(7) The LOW_VALUE and HIGH_VALUE values are stored as RAW, so they must be using Oracle's internal storage format for whichever datatype the column consists of. Oracle does supply conversion routines via the DBMS_STATS package. These routines are deployed as procedures.

As Oracle 12c allows using functions defined in a SQL statement these procedures can be used in queries written for a 12c database. Using the DBMS_STATS conversion procedure in databases < 12c requires creating functions so that the values may be returned to a SQL statement. While that method will work, it is often not desirable, and may not even be possible, particularly in a production database. When I say 'not even be possible' what I mean is not that it cannot be done, but that doing so is probably not allowed in many databases.

Setting Up the Test Environment

To create a SQL statement that can show the high and low values, it will be necessary to use some other means. Let's start off by creating some data to work with.

define chars='ABCDEFGHIJKLMNOPQRSTUVWXYZ0123456789abcdefghijklmnopqrstuvwxyz' create table low_high as select id , mod(id,128) n1 , substr('&&chars',mod(id,42)+1, 20) c1 , sysdate-(mod(id,1000)+1) d1 from ( select level id from dual connect by level <= 128 * 1024 ) / exec dbms_stats.gather_table_stats(ownname => user, tabname => 'LOW_HIGH', method_opt => 'for all columns size auto') Observing the Native RAW Representation

Now that we have a table, let's take a look at the ranges of values. Note: I am using the _TAB_COLUMNS views for some queries just for simplification of the SQL for demonstration.

col low_value format a40 col high_value format a40 prompt prompt NUMERIC prompt select column_name, low_value, high_value from user_tab_columns where table_name = 'LOW_HIGH' and data_type = 'NUMBER' / prompt prompt VARCHAR2 prompt select column_name, low_value, high_value from user_tab_columns where table_name = 'LOW_HIGH' and data_type = 'VARCHAR2' / prompt prompt DATE prompt select column_name, low_value, high_value from user_tab_columns where table_name = 'LOW_HIGH' and data_type = 'DATE' / NUMERIC COLUMN LOW_VALUE HIGH_VALUE ---------- ---------------------------------------- ---------------------------------------- ID C102 C30E0B49 N1 80 C2021C VARCHAR2 COLUMN LOW_VALUE HIGH_VALUE ---------- ---------------------------------------- ---------------------------------------- C1 303132333435363738396162636465666768696A 666768696A6B6C6D6E6F70717273747576777879 DATE COLUMN LOW_VALUE HIGH_VALUE ---------- ---------------------------------------- ---------------------------------------- D1 7871030D121C04 78730C07121C04 Converting Numerics and Strings with UTL_RAW

Clearly the values being stored for LOW_VALUE and HIGH_VALUE are of little use to us in their current format. For the NUMBER and character data types (VARCHAR2, VARCHAR, CHAR) the package UTL_RAW can be used to get the actual values. Here is an example of converting some of these to a human readable format.

col low_value format 999999999999 col high_value format 999999999999 select column_name , utl_raw.cast_to_number(low_value) low_value , utl_raw.cast_to_number(high_value) high_value from user_tab_columns where table_name = 'LOW_HIGH' and data_type = 'NUMBER' / col low_value format a20 col high_value format a20 select column_name , utl_raw.cast_to_varchar2(low_value) low_value , utl_raw.cast_to_varchar2(high_value) high_value from user_tab_columns where table_name = 'LOW_HIGH' and data_type = 'VARCHAR2' / COLUMN LOW_VALUE HIGH_VALUE ------------------------------ ------------- ------------- N1 0 127 ID 1 131072 COLUMN LOW_VALUE HIGH_VALUE ------------------------------ -------------------- -------------------- C1 0123456789abcdefghij fghijklmnopqrstuvwxy These values can be verified, as shown here with the N1 column:

SQL> select min(n1), max(n1) from low_high; MIN(N1) MAX(N1) ---------- ---------- 0 127 Decoding the Internal Oracle DATE Format

So far I have done this only with these simple versions of these data types. Variations such as NVARCHAR2, BINARY_FLOAT and others may require different handling. What is missing? The DATE column has not yet been handled.

Converting the raw date format to a readable date is not so straightforward as there does not seem to be any conversion function available for that. First it will be necessary to know how Oracle stores a date in the database. Oracle's internal date format has been documented a number of times and is well known, such as in the following Oracle Support Note: How does Oracle store the DATE datatype internally? (Doc ID 69028.1) Oracle dates consist of seven parts:

- Century

- Year

- Month

- Day

- Hour

- Minute

- Second

The internal representation of this format can be seen by running the script in Example 1.

Example 1: dumping the internal date format

alter session set nls_date_format = 'mm/dd/yyyy hh24:mi:ss'; col today format a40 drop table t1; create table t1 as select sysdate today from dual; select to_char(today) today from t1 union select dump(today) today from t1; TODAY ---------------------------------------- 12/09/2015 13:13:57 Typ=12 Len=7: 120,115,12,9,14,14,58 The hour, minute and second are all stored in excess-1 notation, so 1 must be subtracted from them to get the correct time. Using excess-1 notation prevents a zero byte from being stored. The month and day are both stored with the actual value. The values for the century and year are stored in excess-100 notation. This means that 100 must be subtracted from the value before using it.

The following example demonstrates how the components of a date can be extracted from the information returned by the dump() function.

col cyear format 9999 col month format a2 col day format a2 col hour format 99 col minute format 99 col second format 99 select -- extract the century and year information from the -- internal date format -- century = (century byte -100) * 100 ( to_number( -- parse out integer appearing before first comma substr( startup_dump, 1, instr(startup_dump,',')-1) - 100 ) * 100 ) + -- year = year byte - 100 ( to_number( substr( startup_dump, -- get position of 2nd comma instr(startup_dump,',',2)+1, -- get position of 2nd comma - position of 1st comma instr(startup_dump,',',1,2) - instr(startup_dump,',',1,1) -1 ) ) - 100 ) cyear , substr( startup_dump, instr(startup_dump,',',1,2)+1, instr(startup_dump,',',1,3) - instr(startup_dump,',',1,2) -1 ) month , substr( startup_dump, instr(startup_dump,',',1,3)+1, instr(startup_dump,',',1,4) - instr(startup_dump,',',1,3) -1 ) day , to_number(substr( startup_dump, instr(startup_dump,',',1,4)+1, instr(startup_dump,',',1,5) - instr(startup_dump,',',1,4) -1 ))-1 hour , to_number(substr( startup_dump, instr(startup_dump,',',1,5)+1, instr(startup_dump,',',1,6) - instr(startup_dump,',',1,5) -1 ))-1 minute , to_number(substr( startup_dump, instr(startup_dump,',',1,6)+1 ))-1 second from ( -- return just the date bytes from the dump() select substr(dump(startup_time),15) startup_dump from v$instance ) a / CYEAR MO DA HOUR MINUTE SECOND ----- -- -- ---- ------ ------ 2015 11 18 17 33 32 Note: the internal format for SYSDATE is not the same as dates stored in a table. This is also true for TIMESTAMP and SYSTIMESTAMP.

A Unified SQL Solution for Human-Readable Statistics

So, now we can make use of this to examine the values Oracle stores to bind the ranges of columns, this time including the DATE columns.

col low_value format a20 col high_value format a20 col table_name format a10 head 'TABLE' col data_type format a20 col column_name format a6 head 'COLUMN' set linesize 200 trimspool on set pagesize 60 select us.table_name, uc.data_type, us.column_name, case when uc.data_type in ('VARCHAR2','VARCHAR','CHAR') then utl_raw.cast_to_varchar2(us.low_value) when uc.data_type = 'NUMBER' then to_char(utl_raw.cast_to_number(us.low_value) ) when uc.data_type = 'DATE' then -- extract the century and year information from the -- internal date format -- century = (century byte -100) * 100 to_char(( to_number( -- parse out integer appearing before first comma substr( substr(dump(us.low_value),15), 1, instr(substr(dump(us.low_value),15),',')-1) - 100 ) * 100 ) + -- year = year byte - 100 ( to_number( substr( substr(dump(us.low_value),15), -- get position of 2nd comma instr(substr(dump(us.low_value),15),',',2)+1, -- get position of 2nd comma - position of 1st comma instr(substr(dump(us.low_value),15),',',1,2) - instr(substr(dump(us.low_value),15),',',1,1) -1 ) ) - 100 )) --current_year || '-' || lpad( substr( substr(dump(us.low_value),15), instr(substr(dump(us.low_value),15),',',1,2)+1, instr(substr(dump(us.low_value),15),',',1,3) - instr(substr(dump(us.low_value),15),',',1,2) -1 ) -- month ,2,'0' ) || '-' || lpad( substr( substr(dump(us.low_value),15), instr(substr(dump(us.low_value),15),',',1,3)+1, instr(substr(dump(us.low_value),15),',',1,4) - instr(substr(dump(us.low_value),15),',',1,3) -1 ) -- day ,2,'0' ) || ' ' || lpad( to_char(to_number( substr( substr(dump(us.low_value),15), instr(substr(dump(us.low_value),15),',',1,4)+1, instr(substr(dump(us.low_value),15),',',1,5) - instr(substr(dump(us.low_value),15),',',1,4) -1 ) )-1) ,2,'0' ) -- hour || ':' || lpad( to_char( to_number( substr( substr(dump(us.low_value),15), instr(substr(dump(us.low_value),15),',',1,5)+1, instr(substr(dump(us.low_value),15),',',1,6) - instr(substr(dump(us.low_value),15),',',1,5) -1 ) )-1 ) ,2,'0' ) -- minute || ':' || lpad( to_char( to_number( substr( substr(dump(us.low_value),15), instr(substr(dump(us.low_value),15),',',1,6)+1 ) )-1 ) ,2,'0' ) --second else 'NOT SUPPORTED' end low_value, -- get the high value case when uc.data_type in ('VARCHAR2','VARCHAR','CHAR') then utl_raw.cast_to_varchar2(us.high_value) when uc.data_type = 'NUMBER' then to_char(utl_raw.cast_to_number(us.high_value) ) when uc.data_type = 'DATE' then -- extract the century and year information from the -- internal date format -- century = (century byte -100) * 100 to_char(( to_number( -- parse out integer appearing before first comma substr( substr(dump(us.high_value),15), 1, instr(substr(dump(us.high_value),15),',')-1) - 100 ) * 100 ) + -- year = year byte - 100 ( to_number( substr( substr(dump(us.high_value),15), -- get position of 2nd comma instr(substr(dump(us.high_value),15),',',2)+1, -- get position of 2nd comma - position of 1st comma instr(substr(dump(us.high_value),15),',',1,2) - instr(substr(dump(us.high_value),15),',',1,1) -1 ) ) - 100 )) --current_year || '-' || lpad( substr( substr(dump(us.high_value),15), instr(substr(dump(us.high_value),15),',',1,2)+1, instr(substr(dump(us.high_value),15),',',1,3) - instr(substr(dump(us.high_value),15),',',1,2) -1 ) -- month ,2,'0' ) || '-' || lpad( substr( substr(dump(us.high_value),15), instr(substr(dump(us.high_value),15),',',1,3)+1, instr(substr(dump(us.high_value),15),',',1,4) - instr(substr(dump(us.high_value),15),',',1,3) -1 ) -- day ,2,'0' ) || ' ' || lpad( to_char(to_number( substr( substr(dump(us.high_value),15), instr(substr(dump(us.high_value),15),',',1,4)+1, instr(substr(dump(us.high_value),15),',',1,5) - instr(substr(dump(us.high_value),15),',',1,4) -1 ) )-1) ,2,'0' ) -- hour || ':' || lpad( to_char( to_number( substr( substr(dump(us.high_value),15), instr(substr(dump(us.high_value),15),',',1,5)+1, instr(substr(dump(us.high_value),15),',',1,6) - instr(substr(dump(us.high_value),15),',',1,5) -1 ) )-1 ) ,2,'0' ) -- minute || ':' || lpad( to_char( to_number( substr( substr(dump(us.high_value),15), instr(substr(dump(us.high_value),15),',',1,6)+1 ) )-1 ) ,2,'0' ) --second else 'NOT SUPPORTED' end high_value from all_tab_col_statistics us join all_tab_columns uc on uc.owner = us.owner and uc.table_name = us.table_name and uc.column_name = us.column_name and us.owner = USER and us.table_name = 'LOW_HIGH' order by uc.column_id / TABLE DATA_TYPE COLUMN LOW_VALUE HIGH_VALUE ---------- -------------------- ------ -------------------- -------------------- LOW_HIGH NUMBER ID 1 131072 LOW_HIGH NUMBER N1 0 127 LOW_HIGH VARCHAR2 C1 0123456789abcdefghij fghijklmnopqrstuvwxy LOW_HIGH DATE D1 2013-03-13 17:27:03 2015-12-07 17:27:03 4 rows selected. Verify the D1 column values

SQL> select min(d1) min_d1, max(d1) max_d1 from low_high; MIN_D1 MAX_D1 ------------------- ------------------- 2013-03-13 17:27:03 2015-12-07 17:27:03 1 row selected. And there you have it. We can now see in human readable form the low and high values that Oracle has stored for each column. While it is a rather complex SQL statement, it really is not difficult to understand once you know the purpose behind.

The beauty of this script is that no functions or procedures need to be created to make use of it. If you would like add TIMESTAMP or any other value to the script, please, do so! The SQL can be found here in the [Low-High GitHub repo]. Now that the values can be viewed, the next task will be to put the script to use by using some examples to see how Oracle handles predicates outside the known range of values.

Oracle Database Consulting Services

Ready to optimize your Oracle Database for the future?

Share this

Share this

More resources

Learn more about Pythian by reading the following blogs and articles.

OEM 13c Monitoring Features - Part 4, Corrective Actions

Making existing SQLPLUS Scripts 12c and Container DB (PDB) compatible

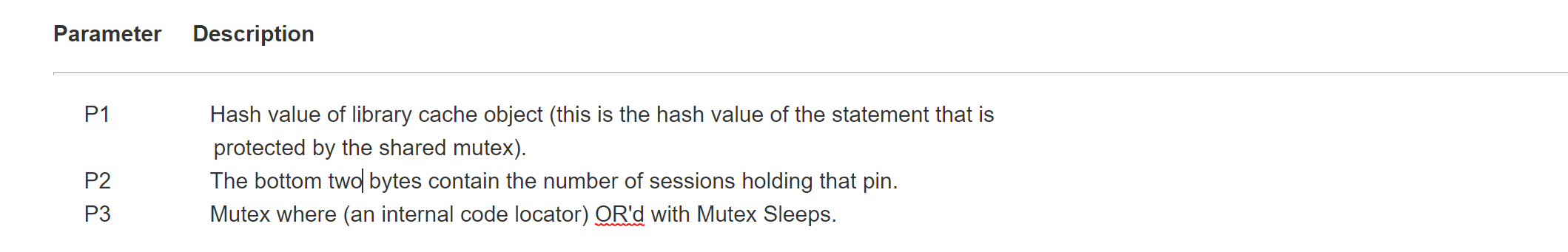

Reducing Contention on Hot Cursor Objects (Cursor: Pin S)

Ready to unlock value from your data?

With Pythian, you can accomplish your data transformation goals and more.