Availability Group - Delay with Listener Connectivity After Failover

The beauty of working for multiple clients from different industries is that you get exposed to a myriad of environment setups and configurations. Every company has its own standards for network and server configuration, as well as different hardware vendors. This introduces its own kinks and excitement to your everyday work—half of which you'll likely not encounter if you are working in-house and using the same hardware.

Last week we encountered a rare and interesting issue with a SQL Server Availability Group (AG). The issue was two-fold. First, automatic failover was initially not working on one node (though that was not as exciting as the second part). Second, once the group was able to fail over correctly, our client experienced delays before the Listener Name became available outside its own subnet, whether the failover was automatic or manual.

The Listener was reachable within its own subnet but took more than thirty minutes to become reachable outside of it, even though the failover happened smoothly and without error.

1. Resolving DNS Registration Failures

The first part was fairly straightforward. The cluster logs and event logs showed that automatic failover was throwing the error below when trying to fail over to one of the nodes:

Cluster network name resource 'Listener_DNS_NAME' failed registration of one or more associated DNS name(s) for the following reason: DNS operation refused. Ensure that the network adapters associated with dependent IP address resources are configured with at least one accessible DNS server.

Fixing Active Directory Permissions

The error means what it says: the Computer object does not have the appropriate permissions in the domain to register the DNS Name Resource for the Listener. For the cluster to perform this operation smoothly, "Authenticated Users" should have Read and Write All permissions on the Computer objects for the cluster, its nodes, and the Listener DNS Name.

To do this:

- Log in to the Active Directory Server.

- Open Active Directory Users and Computers.

- On the View menu, select Advanced Features.

- Right-click the Computer object (the cluster, a node, or the Listener) and then click Properties.

- On the Security tab, click Advanced to view all of the permission entries that exist for the object.

- Verify that Authenticated Users is in the list and has the Read and Write All permissions. If not, add the required permissions and then save the changes.

2. Addressing Kerberos and SPN Errors

After doing that and testing the failover again, we hit a different error, this time a Kerberos-related one, shown below:

The Kerberos client received a KRB_AP_ERR_MODIFIED error from the server ComputerName$. The target name used was HTTP/ComputerName.Domain.com. This indicates that the target server failed to decrypt the ticket provided by the client. This can occur when the target server principal name (SPN) is registered on an account other than the account the target service is using.

Configuring the Service Principal Name (SPN)

Ah, the often overlooked SPN. Setting the SPN should be part of your installation process. To keep the story short, refer to Microsoft's documentation on registering a Service Principal Name for Kerberos connections for detailed instructions on how to configure the SPN for SQL Server. Aside from registering the SPN for each of the nodes, you'll also need to register the SPN for the Listener (assuming 1433 is the port used):

setspn -A MSSQLSvc/Listener_DNS_NAME.Domain.com:1433 DOMAIN/SQLServiceAccount

This will enable Kerberos for the client connection to the Availability Group Listener and address the errors we received above.
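As a rough illustration of which SPNs end up being registered, the sketch below generates one `setspn` command line per node and for the Listener. The node names `NODE1` and `NODE2` and the helper `listener_spn_commands` are hypothetical, and the sketch uses `setspn -S` (which checks for a duplicate SPN before adding) rather than the `-A` switch shown above. It only prints commands and does not run them.

```python
def listener_spn_commands(hosts, domain_suffix, account, port=1433):
    """Build one `setspn -S` command line per host name.

    hosts         -- short names of the nodes and the Listener (hypothetical here)
    domain_suffix -- DNS suffix, e.g. "Domain.com"
    account       -- the SQL Server service account that should own the SPNs
    port          -- TCP port SQL Server listens on (1433 assumed, as in the text)
    """
    commands = []
    for host in hosts:
        spn = f"MSSQLSvc/{host}.{domain_suffix}:{port}"
        commands.append(f"setspn -S {spn} {account}")
    return commands


# Print the commands so they can be reviewed before being run by hand.
for line in listener_spn_commands(
    ["NODE1", "NODE2", "Listener_DNS_NAME"],  # NODE1/NODE2 are placeholders
    "Domain.com",
    "DOMAIN/SQLServiceAccount",
):
    print(line)
```

Generating the list this way makes it harder to forget the Listener entry, which is the one that is usually missed.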

3. The "Aha!" Moment: MAC Address Conflicts and GARP

After configuring the SPN for the servers, automatic failover was running smoothly, or so we thought. The client came back to us saying that it was taking some time for the application to connect to the Listener Name.

Ping tests within the database subnet were successful, but ping tests from outside of it were timing out. It took more than thirty minutes for the name to become reachable outside of the database subnet. After involving the Network Admin, we found out that a MAC Address conflict was happening.
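To put a number on a delay like this, a small probe can be run from a machine outside the database subnet right after a failover. The sketch below is one possible approach, not what we used at the time: it tries a TCP connection to the Listener on port 1433 instead of an ICMP ping, and the function names, timeouts, and polling interval are all assumptions.

```python
import socket
import time


def can_connect(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


def seconds_until_reachable(host, port, max_wait=3600.0, interval=10.0):
    """Poll host:port until it accepts a connection.

    Returns the elapsed seconds at the first success, or None if max_wait
    passes without a successful connection.
    """
    start = time.monotonic()
    while True:
        if can_connect(host, port):
            return time.monotonic() - start
        if time.monotonic() - start >= max_wait:
            return None
        time.sleep(interval)


# Example (run right after triggering a failover):
#   delay = seconds_until_reachable("Listener_DNS_NAME.Domain.com", 1433)
#   print("never reachable" if delay is None else f"reachable after {delay:.0f}s")
```

Running the same probe from inside and outside the subnet makes the difference between the two paths obvious.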

Understanding Gratuitous ARP (GARP)

Windows Server 2003 and later issue a Gratuitous ARP (GARP) request during failover to announce that the Listener's IP address now belongs to a different MAC address. Some switches and other network devices do not forward Gratuitous ARP by default, so the devices on the other side of the switch keep the old MAC address associated with the Listener. The situation often corrects itself when the router detects the failure, performs a broadcast, and gets the correct value, hence the 30-minute delay.
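For readers who have not looked at one on the wire, the sketch below builds the bytes of a Gratuitous ARP request. What makes it "gratuitous" is that the sender and target IP addresses are the same and the frame goes to the Ethernet broadcast address. The MAC and IP values in the example are made up, and the function only constructs the frame; it does not send anything.

```python
import socket
import struct

BROADCAST_MAC = b"\xff" * 6  # Ethernet broadcast address
ETHERTYPE_ARP = 0x0806


def build_gratuitous_arp(mac: bytes, ip: str) -> bytes:
    """Return a 42-byte Ethernet frame carrying a Gratuitous ARP request."""
    if len(mac) != 6:
        raise ValueError("MAC address must be exactly 6 bytes")
    ip_bytes = socket.inet_aton(ip)

    # Ethernet header: destination, source, EtherType.
    ethernet = BROADCAST_MAC + mac + struct.pack("!H", ETHERTYPE_ARP)

    # ARP payload (28 bytes for Ethernet/IPv4).
    arp = struct.pack(
        "!HHBBH6s4s6s4s",
        1,            # hardware type: Ethernet
        0x0800,       # protocol type: IPv4
        6,            # hardware address length
        4,            # protocol address length
        1,            # opcode: request
        mac,          # sender MAC: the node that now owns the address
        ip_bytes,     # sender IP: the address being announced
        b"\x00" * 6,  # target MAC: unknown, left as zeros
        ip_bytes,     # target IP: same as sender IP, which makes it gratuitous
    )
    return ethernet + arp


# Example with made-up values:
frame = build_gratuitous_arp(bytes.fromhex("020000000001"), "10.0.0.50")
print(len(frame), frame.hex())
```

Any device that sees this broadcast can update its ARP table immediately. A device that never receives it keeps sending traffic to the old MAC address until its own entry is refreshed.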

4. Final Resolution: Registry Tuning for ARP

To address this, the configuration of the switches must be changed (check with your hardware vendor). However, even after the switch was configured to forward GARP, we found that the server itself was not sending a GARP request. This is a server configuration issue and requires Registry changes.

Open the Registry Editor on the server and locate the key below: HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\Tcpip\Parameters

From there, check whether there is a value named ArpRetryCount. If there is, make sure it is not set to 0, because 0 stops the server from sending Gratuitous ARP. The setting accepts values from 0 to 3, so it should be between 1 and 3.
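The same check can be scripted so it is easy to repeat on every node. The sketch below is a minimal example under a few assumptions: it uses Python's standard `winreg` module, it only reads the value and never changes it, and the helper names and messages are hypothetical. On a non-Windows machine the reader simply returns None.

```python
import sys

KEY_PATH = r"SYSTEM\CurrentControlSet\Services\Tcpip\Parameters"
VALUE_NAME = "ArpRetryCount"


def read_arp_retry_count():
    """Return the ArpRetryCount value, or None if it is absent or not on Windows."""
    if sys.platform != "win32":
        return None
    import winreg  # Windows-only standard library module

    try:
        with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, KEY_PATH) as key:
            value, _value_type = winreg.QueryValueEx(key, VALUE_NAME)
            return value
    except FileNotFoundError:
        # The key or the value does not exist.
        return None


def describe_arp_retry_count(value):
    """Turn the raw setting into a short verdict, following the text above."""
    if value is None:
        return "not set: nothing to change"
    if value == 0:
        return "0: Gratuitous ARP is not sent, change to 1-3"
    if 1 <= value <= 3:
        return f"{value}: OK"
    return f"{value}: outside the 0-3 range"


print(describe_arp_retry_count(read_arp_retry_count()))
```

Keeping the verdict logic separate from the registry read means the rule can be checked on any machine, even one without the registry.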

After we changed this and restarted the servers, everything worked perfectly. These last two issues are a bit rare; I wouldn't have experienced them if the client hadn't been using that particular hardware and that specific standard configuration.

SQL Server Consulting Services

Ready to future-proof your SQL Server investment?

Share this

Share this

More resources

Learn more about Pythian by reading the following blogs and articles.

Learn how to optimize text searches in SQL Server 2014 by using Full-Text Search - part 1

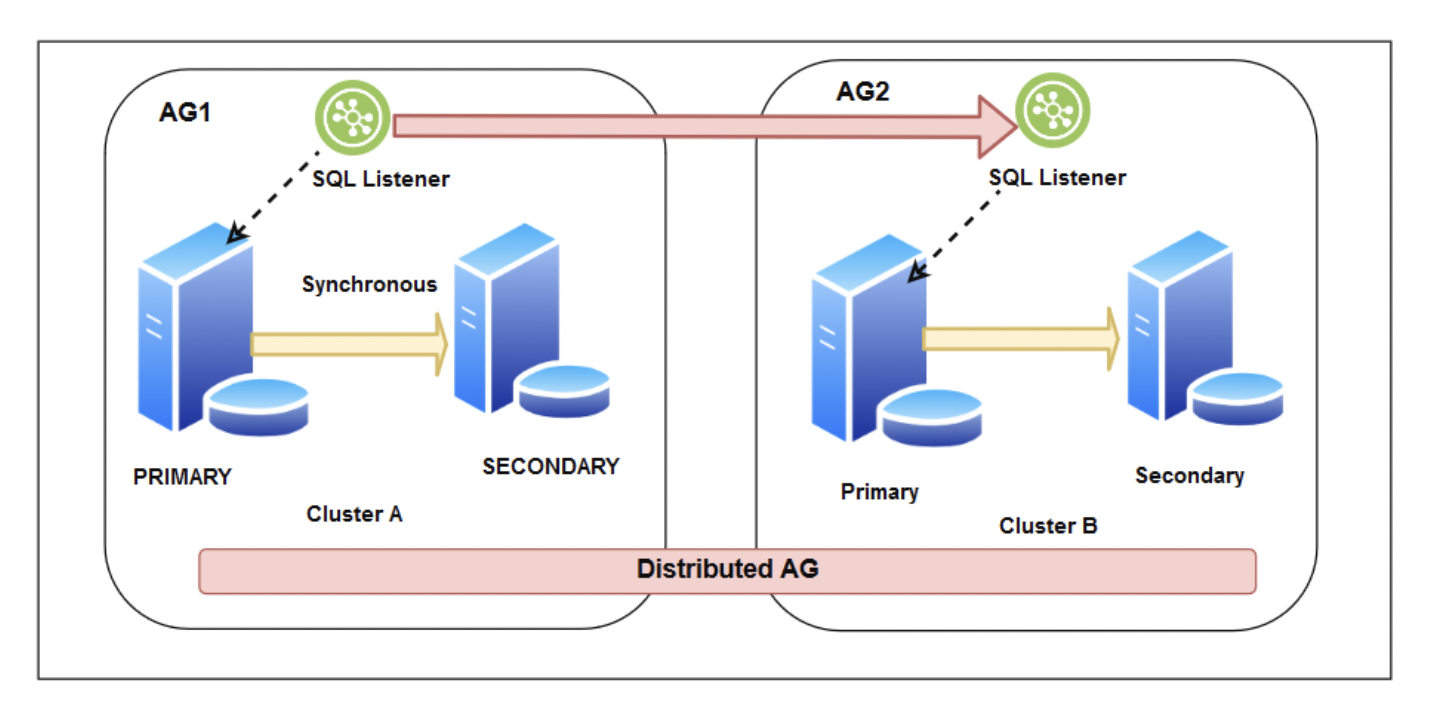

DISTRIBUTED ALWAYS ON

Conventions Make for Easy Automation

Ready to unlock value from your data?

With Pythian, you can accomplish your data transformation goals and more.